Table of Contents

- 1. Features

- I. Cloud Native Applications

- 2. Spring Cloud Context: Application Context Services

- 2.1. The Bootstrap Application Context

- 2.2. Application Context Hierarchies

- 2.3. Changing the Location of Bootstrap Properties

- 2.4. Overriding the Values of Remote Properties

- 2.5. Customizing the Bootstrap Configuration

- 2.6. Customizing the Bootstrap Property Sources

- 2.7. Logging Configuration

- 2.8. Environment Changes

- 2.9. Refresh Scope

- 2.10. Encryption and Decryption

- 2.11. Endpoints

- 3. Spring Cloud Commons: Common Abstractions

- 3.1. @EnableDiscoveryClient

- 3.2. ServiceRegistry

- 3.3. Spring RestTemplate as a Load Balancer Client

- 3.4. Spring WebClient as a Load Balancer Client

- 3.5. Multiple RestTemplate objects

- 3.6. Spring WebFlux WebClient as a Load Balancer Client

- 3.7. Ignore Network Interfaces

- 3.8. HTTP Client Factories

- 3.9. Enabled Features

- 3.10. Spring Cloud Compatibility Verification

- II. Spring Cloud Config

- 4. Quick Start

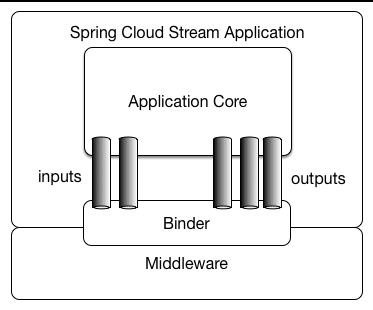

- 5. Spring Cloud Config Server

- 5.1. Environment Repository

- 5.1.1. Git Backend

- Skipping SSL Certificate Validation

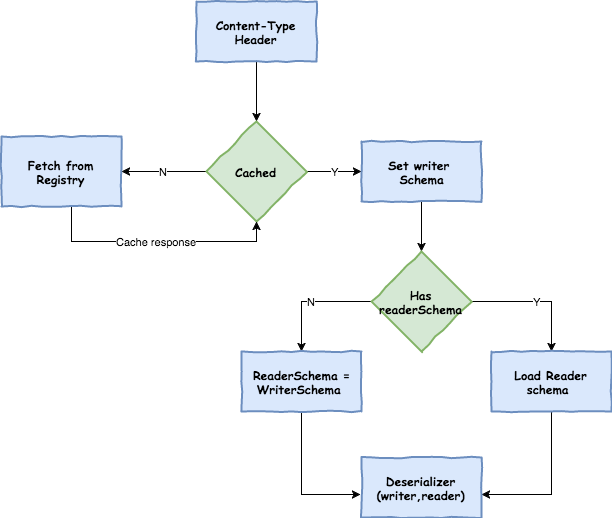

- Setting HTTP Connection Timeout

- Placeholders in Git URI

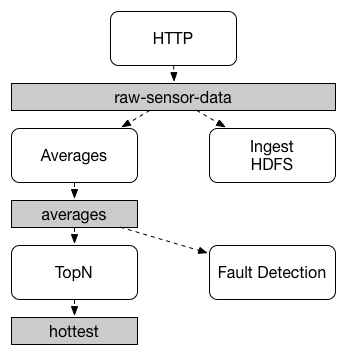

- Pattern Matching and Multiple Repositories

- Authentication

- Authentication with AWS CodeCommit

- Git SSH configuration using properties

- Placeholders in Git Search Paths

- Force pull in Git Repositories

- Deleting untracked branches in Git Repositories

- Git Refresh Rate

- 5.1.2. Version Control Backend Filesystem Use

- 5.1.3. File System Backend

- 5.1.4. Vault Backend

- 5.1.5. Accessing Backends Through a Proxy

- 5.1.6. Sharing Configuration With All Applications

- 5.1.7. JDBC Backend

- 5.1.8. CredHub Backend

- 5.1.9. Composite Environment Repositories

- 5.1.10. Property Overrides

- 5.2. Health Indicator

- 5.3. Security

- 5.4. Encryption and Decryption

- 5.5. Key Management

- 5.6. Creating a Key Store for Testing

- 5.7. Using Multiple Keys and Key Rotation

- 5.8. Serving Encrypted Properties

- 6. Serving Alternative Formats

- 7. Serving Plain Text

- 8. Embedding the Config Server

- 9. Push Notifications and Spring Cloud Bus

- 10. Spring Cloud Config Client

- III. Spring Cloud Netflix

- 11. Service Discovery: Eureka Clients

- 11.1. How to Include Eureka Client

- 11.2. Registering with Eureka

- 11.3. Authenticating with the Eureka Server

- 11.4. Status Page and Health Indicator

- 11.5. Registering a Secure Application

- 11.6. Eureka’s Health Checks

- 11.7. Eureka Metadata for Instances and Clients

- 11.8. Using the EurekaClient

- 11.9. Alternatives to the Native Netflix EurekaClient

- 11.10. Why Is It so Slow to Register a Service?

- 11.11. Zones

- 12. Service Discovery: Eureka Server

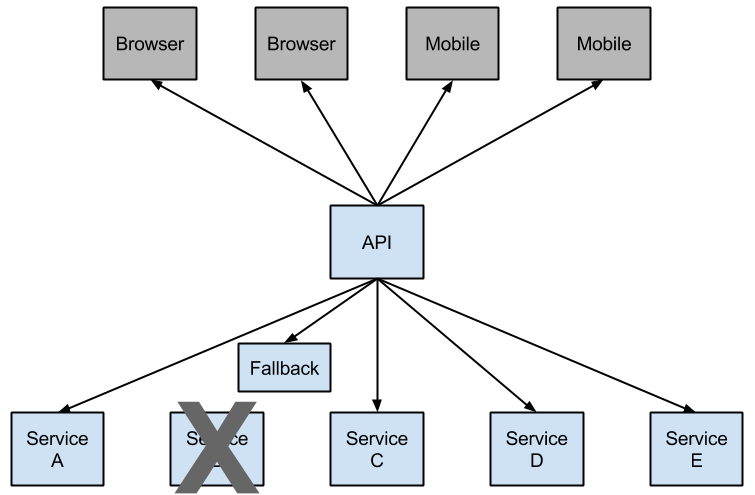

- 13. Circuit Breaker: Hystrix Clients

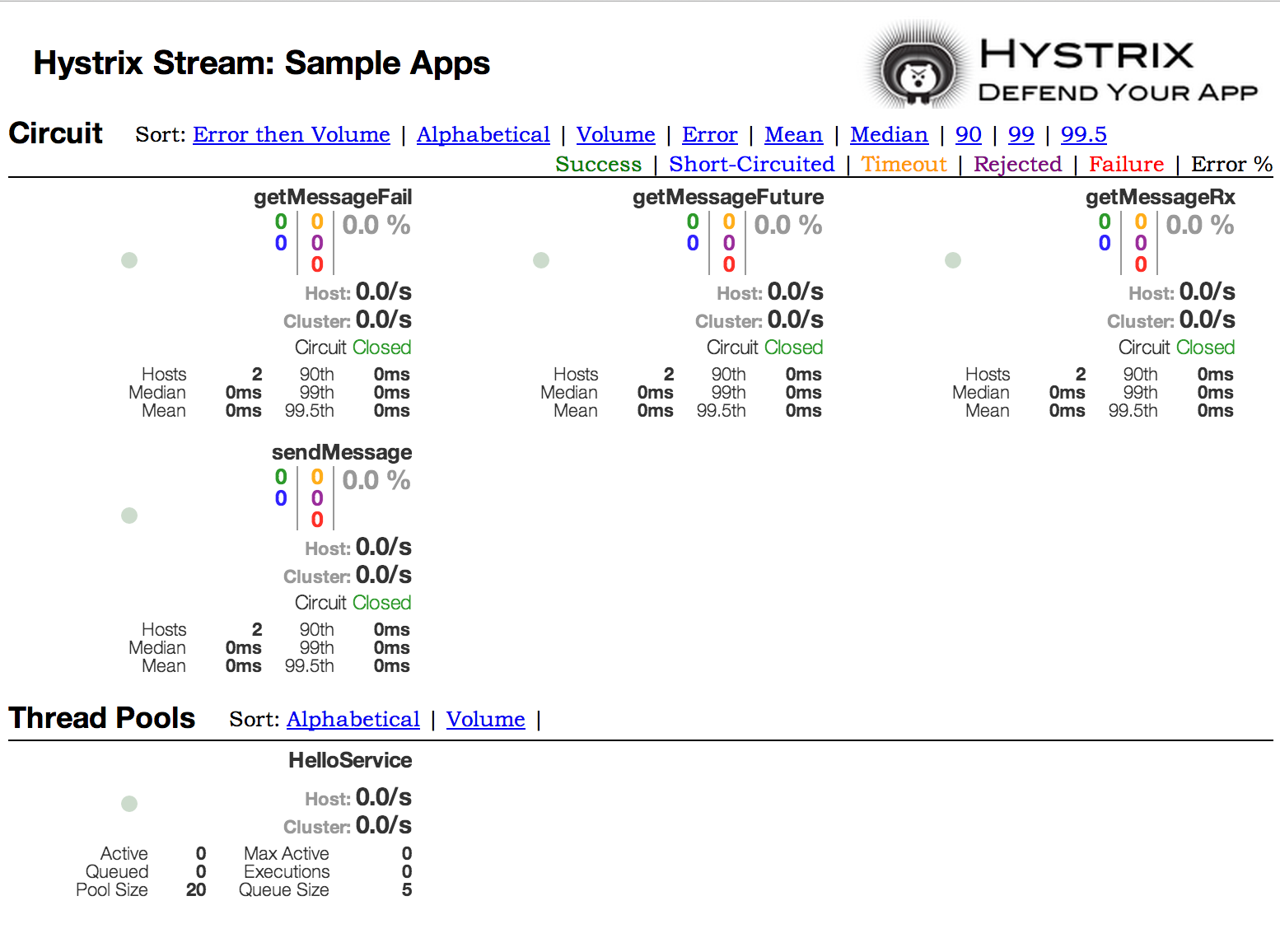

- 14. Circuit Breaker: Hystrix Dashboard

- 15. Hystrix Timeouts And Ribbon Clients

- 16. Client Side Load Balancer: Ribbon

- 16.1. How to Include Ribbon

- 16.2. Customizing the Ribbon Client

- 16.3. Customizing the Default for All Ribbon Clients

- 16.4. Customizing the Ribbon Client by Setting Properties

- 16.5. Using Ribbon with Eureka

- 16.6. Example: How to Use Ribbon Without Eureka

- 16.7. Example: Disable Eureka Use in Ribbon

- 16.8. Using the Ribbon API Directly

- 16.9. Caching of Ribbon Configuration

- 16.10. How to Configure Hystrix Thread Pools

- 16.11. How to Provide a Key to Ribbon’s IRule

- 17. External Configuration: Archaius

- 18. Router and Filter: Zuul

- 18.1. How to Include Zuul

- 18.2. Embedded Zuul Reverse Proxy

- 18.3. Zuul Http Client

- 18.4. Cookies and Sensitive Headers

- 18.5. Ignored Headers

- 18.6. Management Endpoints

- 18.7. Strangulation Patterns and Local Forwards

- 18.8. Uploading Files through Zuul

- 18.9. Query String Encoding

- 18.10. Request URI Encoding

- 18.11. Plain Embedded Zuul

- 18.12. Disable Zuul Filters

- 18.13. Providing Hystrix Fallbacks For Routes

- 18.14. Zuul Timeouts

- 18.15. Rewriting the Location header

- 18.16. Enabling Cross Origin Requests

- 18.17. Metrics

- 18.18. Zuul Developer Guide

- 19. Polyglot support with Sidecar

- 20. Retrying Failed Requests

- 21. HTTP Clients

- 22. Modules In Maintenance Mode

- IV. Spring Cloud OpenFeign

- V. Spring Cloud Stream

- 24. A Brief History of Spring’s Data Integration Journey

- 25. Quick Start

- 26. What’s New in 2.0?

- 27. Introducing Spring Cloud Stream

- 28. Main Concepts

- 29. Programming Model

- 30. Binders

- 31. Configuration Options

- 32. Content Type Negotiation

- 33. Schema Evolution Support

- 34. Inter-Application Communication

- 35. Testing

- 36. Health Indicator

- 37. Metrics Emitter

- 38. Samples

- VI. Binder Implementations

- 39. Apache Kafka Binder

- 40. Apache Kafka Streams Binder

- 40.1. Usage

- 40.2. Kafka Streams Binder Overview

- 40.3. Configuration Options

- 40.4. Multiple Input Bindings

- 40.5. Multiple Output Bindings (aka Branching)

- 40.6. Message Conversion

- 40.7. Error Handling

- 40.8. State Store

- 40.9. Interactive Queries

- 40.10. Accessing the underlying KafkaStreams object

- 40.11. State Cleanup

- 41. RabbitMQ Binder

- VII. Spring Cloud Bus

- VIII. Spring Cloud Sleuth

- 50. Introduction

- 51. Additional Resources

- 52. Features

- 53. Sampling

- 54. Propagation

- 55. Current Tracing Component

- 56. Current Span

- 57. Instrumentation

- 58. Span lifecycle

- 59. Naming spans

- 60. Managing Spans with Annotations

- 61. Customizations

- 62. Sending Spans to Zipkin

- 63. Zipkin Stream Span Consumer

- 64. Integrations

- 65. Running examples

- IX. Spring Cloud Consul

- 66. Install Consul

- 67. Consul Agent

- 68. Service Discovery with Consul

- 69. Distributed Configuration with Consul

- 70. Consul Retry

- 71. Spring Cloud Bus with Consul

- 72. Circuit Breaker with Hystrix

- 73. Hystrix metrics aggregation with Turbine and Consul

- X. Spring Cloud Zookeeper

- 74. Install Zookeeper

- 75. Service Discovery with Zookeeper

- 76. Using Spring Cloud Zookeeper with Spring Cloud Netflix Components

- 77. Spring Cloud Zookeeper and Service Registry

- 78. Zookeeper Dependencies

- 79. Spring Cloud Zookeeper Dependency Watcher

- 80. Distributed Configuration with Zookeeper

- XI. Spring Cloud Security

- XII. Spring Cloud for Cloud Foundry

- XIII. Spring Cloud Contract

- 86. Spring Cloud Contract

- 87. Spring Cloud Contract Verifier Introduction

- 88. Spring Cloud Contract FAQ

- 88.1. Why use Spring Cloud Contract Verifier and not X ?

- 88.2. I don’t want to write a contract in Groovy!

- 88.3. What is this value(consumer(), producer()) ?

- 88.4. How to do Stubs versioning?

- 88.5. Common repo with contracts

- 88.6. Do I need a Binary Storage? Can’t I use Git?

- 88.7. Can I use the Pact Broker?

- 88.8. How can I debug the request/response being sent by the generated tests client?

- 89. Spring Cloud Contract Verifier Setup

- 89.1. Gradle Project

- 89.1.1. Prerequisites

- 89.1.2. Add Gradle Plugin with Dependencies

- 89.1.3. Gradle and Rest Assured 2.0

- 89.1.4. Snapshot Versions for Gradle

- 89.1.5. Add stubs

- 89.1.6. Run the Plugin

- 89.1.7. Default Setup

- 89.1.8. Configure Plugin

- 89.1.9. Configuration Options

- 89.1.10. Single Base Class for All Tests

- 89.1.11. Different Base Classes for Contracts

- 89.1.12. Invoking Generated Tests

- 89.1.13. Pushing stubs to SCM

- 89.1.14. Spring Cloud Contract Verifier on the Consumer Side

- 89.2. Maven Project

- 89.2.1. Add maven plugin

- 89.2.2. Maven and Rest Assured 2.0

- 89.2.3. Snapshot versions for Maven

- 89.2.4. Add stubs

- 89.2.5. Run plugin

- 89.2.6. Configure plugin

- 89.2.7. Configuration Options

- 89.2.8. Single Base Class for All Tests

- 89.2.9. Different base classes for contracts

- 89.2.10. Invoking generated tests

- 89.2.11. Pushing stubs to SCM

- 89.2.12. Maven Plugin and STS

- 89.2.13. Maven Plugin with Spock Tests

- 89.3. Stubs and Transitive Dependencies

- 89.4. Scenarios

- 89.5. Docker Project

- 90. Spring Cloud Contract Verifier Messaging

- 91. Spring Cloud Contract Stub Runner

- 92. Stub Runner for Messaging

- 93. Contract DSL

- 93.1. Limitations

- 93.2. Common Top-Level elements

- 93.3. Request

- 93.4. Response

- 93.5. Dynamic properties

- 93.5.1. Dynamic properties inside the body

- 93.5.2. Regular expressions

- 93.5.3. Passing Optional Parameters

- 93.5.4. Executing Custom Methods on the Server Side

- 93.5.5. Referencing the Request from the Response

- 93.5.6. Registering Your Own WireMock Extension

- 93.5.7. Dynamic Properties in the Matchers Sections

- 93.6. JAX-RS Support

- 93.7. Async Support

- 93.8. Working with Context Paths

- 93.9. Working with WebFlux

- 93.10. XML Support for REST

- 93.11. Messaging Top-Level Elements

- 93.12. Multiple Contracts in One File

- 93.13. Generating Spring REST Docs snippets from the contracts

- 94. Customization

- 95. Using the Pluggable Architecture

- 96. Spring Cloud Contract WireMock

- 96.1. Registering Stubs Automatically

- 96.2. Using Files to Specify the Stub Bodies

- 96.3. Alternative: Using JUnit Rules

- 96.4. Relaxed SSL Validation for Rest Template

- 96.5. WireMock and Spring MVC Mocks

- 96.6. Customization of WireMock configuration

- 96.7. Generating Stubs using REST Docs

- 96.8. Generating Contracts by Using REST Docs

- 97. Migrations

- 98. Links

- XIV. Spring Cloud Vault

- 99. Quick Start

- 100. Client Side Usage

- 101. Authentication methods

- 102. GCP-GCE authentication

- 103. GCP-IAM authentication

- 104. Secret Backends

- 105. Database backends

- 106. Configure PropertySourceLocator behavior

- 107. Service Registry Configuration

- 108. Vault Client Fail Fast

- 109. Vault Client SSL configuration

- 110. Lease lifecycle management (renewal and revocation)

- XV. Spring Cloud Gateway

- 111. How to Include Spring Cloud Gateway

- 112. Glossary

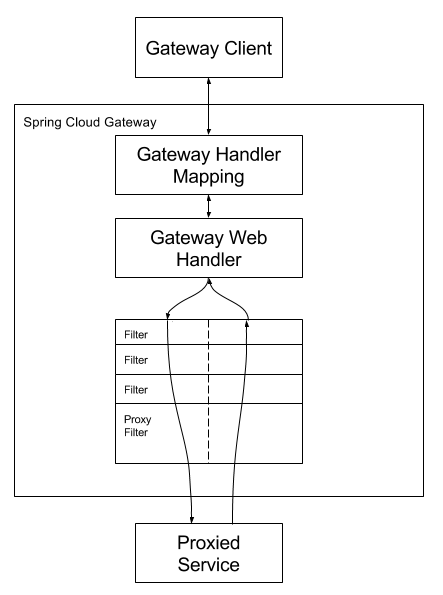

- 113. How It Works

- 114. Route Predicate Factories

- 114.1. After Route Predicate Factory

- 114.2. Before Route Predicate Factory

- 114.3. Between Route Predicate Factory

- 114.4. Cookie Route Predicate Factory

- 114.5. Header Route Predicate Factory

- 114.6. Host Route Predicate Factory

- 114.7. Method Route Predicate Factory

- 114.8. Path Route Predicate Factory

- 114.9. Query Route Predicate Factory

- 114.10. RemoteAddr Route Predicate Factory

- 115. GatewayFilter Factories

- 115.1. AddRequestHeader GatewayFilter Factory

- 115.2. AddRequestParameter GatewayFilter Factory

- 115.3. AddResponseHeader GatewayFilter Factory

- 115.4. Hystrix GatewayFilter Factory

- 115.5. FallbackHeaders GatewayFilter Factory

- 115.6. PrefixPath GatewayFilter Factory

- 115.7. PreserveHostHeader GatewayFilter Factory

- 115.8. RequestRateLimiter GatewayFilter Factory

- 115.9. RedirectTo GatewayFilter Factory

- 115.10. RemoveNonProxyHeaders GatewayFilter Factory

- 115.11. RemoveRequestHeader GatewayFilter Factory

- 115.12. RemoveResponseHeader GatewayFilter Factory

- 115.13. RewritePath GatewayFilter Factory

- 115.14. RewriteResponseHeader GatewayFilter Factory

- 115.15. SaveSession GatewayFilter Factory

- 115.16. SecureHeaders GatewayFilter Factory

- 115.17. SetPath GatewayFilter Factory

- 115.18. SetResponseHeader GatewayFilter Factory

- 115.19. SetStatus GatewayFilter Factory

- 115.20. StripPrefix GatewayFilter Factory

- 115.21. Retry GatewayFilter Factory

- 115.22. RequestSize GatewayFilter Factory

- 115.23. Modify Request Body GatewayFilter Factory

- 115.24. Modify Response Body GatewayFilter Factory

- 116. Global Filters

- 116.1. Combined Global Filter and GatewayFilter Ordering

- 116.2. Forward Routing Filter

- 116.3. LoadBalancerClient Filter

- 116.4. Netty Routing Filter

- 116.5. Netty Write Response Filter

- 116.6. RouteToRequestUrl Filter

- 116.7. Websocket Routing Filter

- 116.8. Gateway Metrics Filter

- 116.9. Marking An Exchange As Routed

- 117. TLS / SSL

- 118. Configuration

- 119. Reactor Netty Access Logs

- 120. CORS Configuration

- 121. Actuator API

- 122. Developer Guide

- 123. Building a Simple Gateway Using Spring MVC or Webflux

- XVI. Spring Cloud Function

- 124. Introduction

- 125. Getting Started

- 126. Building and Running a Function

- 127. Function Catalog and Flexible Function Signatures

- 128. Standalone Web Applications

- 129. Standalone Streaming Applications

- 130. Deploying a Packaged Function

- 131. Functional Bean Definitions

- 132. Dynamic Compilation

- 133. Serverless Platform Adapters

- XVII. Spring Cloud Kubernetes

- 134. Why do you need Spring Cloud Kubernetes?

- 135. DiscoveryClient for Kubernetes

- 136. Kubernetes native service discovery

- 137. Kubernetes PropertySource implementations

- 138. Ribbon discovery in Kubernetes

- 139. Kubernetes Ecosystem Awareness

- 140. Pod Health Indicator

- 141. Leader Election

- 142. Security Configurations inside Kubernetes

- 143. Examples

- 144. Other Resources

- 145. Building

- 146. Contributing

- XVIII. Spring Cloud GCP

- 147. Introduction

- 148. Dependency Management

- 149. Getting started

- 150. Spring Cloud GCP Core

- 151. Google Cloud Pub/Sub

- 152. Spring Resources

- 153. Spring JDBC

- 154. Spring Integration

- 155. Spring Cloud Stream

- 156. Spring Cloud Sleuth

- 157. Stackdriver Logging

- 158. Spring Cloud Config

- 159. Spring Data Cloud Spanner

- 160. Spring Data Cloud Datastore

- 161. Cloud Memorystore for Redis

- 162. Cloud Identity-Aware Proxy (IAP) Authentication

- 163. Google Cloud Vision

- 164. Cloud Foundry

- 165. Kotlin Support

- 166. Sample

- XIX. Appendix: Compendium of Configuration Properties

Spring Cloud provides tools for developers to quickly build some of the common patterns in distributed systems (e.g. configuration management, service discovery, circuit breakers, intelligent routing, micro-proxy, control bus). Coordination of distributed systems leads to boilerplate patterns, and using Spring Cloud, developers can quickly stand up services and applications that implement those patterns. They work well in any distributed environment, including the developer’s own laptop, bare metal data centres, and managed platforms such as Cloud Foundry.

Version: 1.0.0.BUILD-SNAPSHOT

Spring Cloud focuses on providing a good out-of-the-box experience for typical use cases and an extensibility mechanism to cover others.

- Distributed/versioned configuration

- Service registration and discovery

- Routing

- Service-to-service calls

- Load balancing

- Circuit Breakers

- Distributed messaging

Cloud Native is a style of application development that encourages easy adoption of best practices in the areas of continuous delivery and value-driven development. A related discipline is that of building 12-factor Applications, in which development practices are aligned with delivery and operations goals — for instance, by using declarative programming and management and monitoring. Spring Cloud facilitates these styles of development in a number of specific ways. The starting point is a set of features to which all components in a distributed system need easy access.

Many of those features are covered by Spring Boot, on which Spring Cloud builds. Some more features are delivered by Spring Cloud as two libraries: Spring Cloud Context and Spring Cloud Commons.

Spring Cloud Context provides utilities and special services for the ApplicationContext of a Spring Cloud application (bootstrap context, encryption, refresh scope, and environment endpoints). Spring Cloud Commons is a set of abstractions and common classes used in different Spring Cloud implementations (such as Spring Cloud Netflix and Spring Cloud Consul).

If you get an exception due to "Illegal key size" and you use Sun’s JDK, you need to install the Java Cryptography Extension (JCE) Unlimited Strength Jurisdiction Policy Files. See the following links for more information:

Extract the files into the JDK/jre/lib/security folder for whichever version of JRE/JDK x64/x86 you use.

Note: Spring Cloud is released under the non-restrictive Apache 2.0 license. If you would like to contribute to this section of the documentation or if you find an error, you can find the source code and issue trackers for the project on GitHub.

Spring Boot has an opinionated view of how to build an application with Spring. For instance, it has conventional locations for common configuration files and has endpoints for common management and monitoring tasks. Spring Cloud builds on top of that and adds a few features that probably all components in a system would use or occasionally need.

A Spring Cloud application operates by creating a “bootstrap” context, which is a parent context for the main application.

It is responsible for loading configuration properties from the external sources and for decrypting properties in the local external configuration files.

The two contexts share an Environment, which is the source of external properties for any Spring application.

By default, bootstrap properties (not bootstrap.properties but properties that are loaded during the bootstrap phase) are added with high precedence, so they cannot be overridden by local configuration.

The bootstrap context uses a different convention for locating external configuration than the main application context.

Instead of application.yml (or .properties), you can use bootstrap.yml, keeping the external configuration for the bootstrap and main contexts nicely separate.

The following listing shows an example:

bootstrap.yml

```yaml
spring:
  application:
    name: foo
  cloud:
    config:
      uri: ${SPRING_CONFIG_URI:http://localhost:8888}
```

If your application needs any application-specific configuration from the server, it is a good idea to set the spring.application.name (in bootstrap.yml or application.yml).

You can disable the bootstrap process completely by setting spring.cloud.bootstrap.enabled=false (for example, in system properties).

If you build an application context from SpringApplication or SpringApplicationBuilder, then the Bootstrap context is added as a parent to that context.

It is a feature of Spring that child contexts inherit property sources and profiles from their parent, so the “main” application context contains additional property sources, compared to building the same context without Spring Cloud Config.

The additional property sources are:

- “bootstrap”: If any PropertySourceLocators are found in the Bootstrap context and if they have non-empty properties, an optional CompositePropertySource appears with high priority. An example would be properties from the Spring Cloud Config Server. See Section 2.6, “Customizing the Bootstrap Property Sources”, for instructions on how to customize the contents of this property source.

- “applicationConfig: [classpath:bootstrap.yml]” (and related files if Spring profiles are active): If you have a bootstrap.yml (or .properties), those properties are used to configure the Bootstrap context. Then they get added to the child context when its parent is set. They have lower precedence than the application.yml (or .properties) and any other property sources that are added to the child as a normal part of the process of creating a Spring Boot application. See Section 2.3, “Changing the Location of Bootstrap Properties”, for instructions on how to customize the contents of these property sources.

Because of the ordering rules of property sources, the “bootstrap” entries take precedence.

However, note that these do not contain any data from bootstrap.yml, which has very low precedence but can be used to set defaults.
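To make the ordering rule concrete, here is a stdlib-only Java sketch of first-match-wins resolution over an ordered list of property sources. It is a simplified model, not Spring's MutablePropertySources; the class names NamedSource and PrecedenceResolver and all sample values are hypothetical, with the source names chosen to mirror the list above (the remote “bootstrap” source first, the bootstrap.yml defaults last).

```java
import java.util.List;
import java.util.Map;
import java.util.Optional;

/** A named bag of properties (hypothetical, simplified stand-in for a Spring PropertySource). */
final class NamedSource {
    final String name;
    final Map<String, String> values;

    NamedSource(String name, Map<String, String> values) {
        this.name = name;
        this.values = Map.copyOf(values);
    }
}

/** Scans sources in precedence order (highest first); the first source that defines a key wins. */
final class PrecedenceResolver {
    private final List<NamedSource> sources;

    PrecedenceResolver(List<NamedSource> sources) {
        this.sources = List.copyOf(sources);
    }

    Optional<String> resolve(String key) {
        for (NamedSource source : sources) {
            String value = source.values.get(key);
            if (value != null) {
                return Optional.of(value);
            }
        }
        return Optional.empty();
    }
}
```

Because the bootstrap.yml source sits at the end of the list in this model, its values surface only when no higher-precedence source defines the same key, which is what makes it suitable for defaults.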

You can extend the context hierarchy by setting the parent context of any ApplicationContext you create — for example, by using its own interface or with the SpringApplicationBuilder convenience methods (parent(), child() and sibling()).

The bootstrap context is the parent of the most senior ancestor that you create yourself.

Every context in the hierarchy has its own “bootstrap” (possibly empty) property source to avoid promoting values inadvertently from parents down to their descendants.

If there is a Config Server, every context in the hierarchy can also (in principle) have a different spring.application.name and, hence, a different remote property source.

Normal Spring application context behavior rules apply to property resolution: properties from a child context override those in the parent, by name and also by property source name.

(If the child has a property source with the same name as the parent, the value from the parent is not included in the child.)

Note that the SpringApplicationBuilder lets you share an Environment amongst the whole hierarchy, but that is not the default.

Thus, sibling contexts, in particular, do not need to have the same profiles or property sources, even though they may share common values with their parent.
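The two override rules (by property name, and by property source name) can be sketched in plain Java. This is a simplified model of the behavior described above, not Spring's implementation; ContextSource and HierarchyView are hypothetical names.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** One named property source in a context (hypothetical stand-in). */
final class ContextSource {
    final String name;
    final Map<String, String> values;

    ContextSource(String name, Map<String, String> values) {
        this.name = name;
        this.values = Map.copyOf(values);
    }
}

final class HierarchyView {
    /** Child sources come first; a parent source is dropped if the child has a source with the same name. */
    static List<ContextSource> effectiveSources(List<ContextSource> child, List<ContextSource> parent) {
        List<ContextSource> result = new ArrayList<>(child);
        Set<String> childNames = new HashSet<>();
        for (ContextSource source : child) {
            childNames.add(source.name);
        }
        for (ContextSource source : parent) {
            if (!childNames.contains(source.name)) {
                result.add(source);
            }
        }
        return result;
    }

    /** First match wins, so a child value shadows a parent value with the same key. */
    static String lookup(List<ContextSource> sources, String key) {
        for (ContextSource source : sources) {
            String value = source.values.get(key);
            if (value != null) {
                return value;
            }
        }
        return null;
    }
}
```

In this model, a key that exists only in the parent's same-named source is not visible in the child at all, which is the subtle consequence of the by-source-name rule.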

The bootstrap.yml (or .properties) location can be specified by setting spring.cloud.bootstrap.name (default: bootstrap) or spring.cloud.bootstrap.location (default: empty) — for example, in System properties.

Those properties behave like the spring.config.* variants with the same name.

In fact, they are used to set up the bootstrap ApplicationContext by setting those properties in its Environment.

If there is an active profile (from spring.profiles.active or through the Environment API in the context you are building), properties in that profile get loaded as well, the same as in a regular Spring Boot app (for example, from bootstrap-development.properties for a development profile).
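The naming convention can be illustrated with a small helper that lists candidate resource names for a base name (the role spring.cloud.bootstrap.name plays) and a set of active profiles. This is a hypothetical sketch, not Spring Boot's loader: it assumes only the .properties and .yml extensions, ignores spring.cloud.bootstrap.location, and makes no claim about the order in which real files are read.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: lists bootstrap resource names for a base name and the active profiles. */
final class BootstrapCandidates {
    // Simplification: only two extensions are considered here.
    private static final List<String> EXTENSIONS = List.of("properties", "yml");

    static List<String> candidates(String baseName, List<String> activeProfiles) {
        List<String> result = new ArrayList<>();
        for (String ext : EXTENSIONS) {
            result.add(baseName + "." + ext); // e.g. bootstrap.yml
        }
        for (String profile : activeProfiles) {
            for (String ext : EXTENSIONS) {
                result.add(baseName + "-" + profile + "." + ext); // e.g. bootstrap-development.properties
            }
        }
        return result;
    }
}
```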

The property sources that are added to your application by the bootstrap context are often “remote” (for example, from Spring Cloud Config Server).

By default, they cannot be overridden locally.

If you want to let your applications override the remote properties with their own System properties or config files, the remote property source has to grant it permission by setting spring.cloud.config.allowOverride=true (it does not work to set this locally).

Once that flag is set, two finer-grained settings control the location of the remote properties in relation to system properties and the application’s local configuration:

- spring.cloud.config.overrideNone=true: Override from any local property source.

- spring.cloud.config.overrideSystemProperties=false: Only system properties, command line arguments, and environment variables (but not the local config files) should override the remote settings.
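The effect of these flags on where the remote source lands can be modeled as an insertion into an ordered list of source names. The sketch below is a hypothetical model of the behavior described above, not Spring's code: RemotePlacement and the source names are illustrative, and when allowOverride is true but neither finer-grained setting relaxes precedence, the model keeps the remote source first.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical model of where the remote property source is placed among local sources. */
final class RemotePlacement {
    static final String REMOTE = "remote";
    static final String SYSTEM_ENVIRONMENT = "systemEnvironment";

    /** localSources are in precedence order, highest first (command line, system props, env, config files). */
    static List<String> place(List<String> localSources, boolean allowOverride,
                              boolean overrideNone, boolean overrideSystemProperties) {
        List<String> result = new ArrayList<>(localSources);
        if (!allowOverride || (!overrideNone && overrideSystemProperties)) {
            result.add(0, REMOTE); // nothing local can override the remote values
        } else if (overrideNone) {
            result.add(REMOTE); // every local source overrides the remote values
        } else {
            // overrideSystemProperties == false: only command line args, system properties and
            // environment variables outrank the remote values; local config files do not.
            int env = result.indexOf(SYSTEM_ENVIRONMENT);
            result.add(env < 0 ? result.size() : env + 1, REMOTE);
        }
        return result;
    }
}
```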

The bootstrap context can be set to do anything you like by adding entries to /META-INF/spring.factories under a key named org.springframework.cloud.bootstrap.BootstrapConfiguration.

This holds a comma-separated list of Spring @Configuration classes that are used to create the context.

Any beans that you want to be available to the main application context for autowiring can be created here.

There is a special contract for @Beans of type ApplicationContextInitializer.

If you want to control the startup sequence, classes can be marked with an @Order annotation (the default order is last).

Warning: When adding custom

The bootstrap process ends by injecting initializers into the main SpringApplication instance (which is the normal Spring Boot startup sequence, whether it is running as a standalone application or deployed in an application server).

First, a bootstrap context is created from the classes found in spring.factories.

Then, all @Beans of type ApplicationContextInitializer are added to the main SpringApplication before it is started.

The default property source for external configuration added by the bootstrap process is the Spring Cloud Config Server, but you can add additional sources by adding beans of type PropertySourceLocator to the bootstrap context (through spring.factories).

For instance, you can insert additional properties from a different server or from a database.

As an example, consider the following custom locator:

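```java
package sample.custom;

import java.util.Collections;

import org.springframework.cloud.bootstrap.config.PropertySourceLocator;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.env.Environment;
import org.springframework.core.env.MapPropertySource;
import org.springframework.core.env.PropertySource;

@Configuration
public class CustomPropertySourceLocator implements PropertySourceLocator {

    @Override
    public PropertySource<?> locate(Environment environment) {
        // Contribute one hard-coded property as an extra bootstrap property source
        return new MapPropertySource("customProperty",
                Collections.<String, Object>singletonMap("property.from.sample.custom.source", "worked as intended"));
    }
}
```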

The Environment that is passed in is the one for the ApplicationContext about to be created, in other words, the one for which we supply additional property sources.

It already has its normal Spring Boot-provided property sources, so you can use those to locate a property source specific to this Environment (for example, by keying it on spring.application.name, as is done in the default Spring Cloud Config Server property source locator).

If you create a jar with this class in it and then add a META-INF/spring.factories containing the following, the customProperty PropertySource appears in any application that includes that jar on its classpath:

```properties
org.springframework.cloud.bootstrap.BootstrapConfiguration=sample.custom.CustomPropertySourceLocator
```
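A spring.factories file is a standard Java properties file, and the BootstrapConfiguration key holds a comma-separated list of class names. The sketch below reads such an entry with java.util.Properties; it is a simplified stand-in for illustration, not Spring's SpringFactoriesLoader, and FactoriesReader is a hypothetical name. java.util.Properties also handles the trailing-backslash line continuations often used to spread a long list over several lines.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

/** Hypothetical reader for one key of a spring.factories-style file. */
final class FactoriesReader {
    static final String BOOTSTRAP_KEY = "org.springframework.cloud.bootstrap.BootstrapConfiguration";

    static List<String> classNames(String factoriesContent, String key) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(factoriesContent)); // also joins "\"-continued lines
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        List<String> names = new ArrayList<>();
        String value = props.getProperty(key);
        if (value == null) {
            return names;
        }
        for (String part : value.split(",")) {
            String trimmed = part.trim();
            if (!trimmed.isEmpty()) {
                names.add(trimmed);
            }
        }
        return names;
    }
}
```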

If you use Spring Boot to configure log settings, you should place this configuration in `bootstrap.[yml | properties]` if you would like it to apply to all events.

Note: For Spring Cloud to initialize logging configuration properly, you cannot use a custom prefix. For example, using

The application listens for an EnvironmentChangeEvent and reacts to the change in a couple of standard ways (additional ApplicationListeners can be added as @Beans by the user in the normal way).

When an EnvironmentChangeEvent is observed, it has a list of key values that have changed, and the application uses those to:

- Re-bind any @ConfigurationProperties beans in the context

- Set the logger levels for any properties in logging.level.*
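The logger-level half of that reaction amounts to filtering the changed keys by the logging.level. prefix. The stdlib-only sketch below shows that filtering step; LoggerLevelChanges is hypothetical, it only computes the levels rather than applying them through Spring Boot's LoggingSystem, and it simply ignores keys whose value was removed.

```java
import java.util.Locale;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;

/** Hypothetical helper: extracts logger-level updates from the keys reported as changed. */
final class LoggerLevelChanges {
    private static final String PREFIX = "logging.level.";

    static Map<String, String> levelsToApply(Set<String> changedKeys, Map<String, String> environment) {
        Map<String, String> levels = new TreeMap<>();
        for (String key : changedKeys) {
            if (key.startsWith(PREFIX)) {
                String logger = key.substring(PREFIX.length()); // e.g. "com.example"
                String level = environment.get(key);
                if (level != null) { // removed keys are skipped in this sketch
                    levels.put(logger, level.toUpperCase(Locale.ROOT));
                }
            }
        }
        return levels;
    }
}
```

Keys outside the prefix (such as server.port) fall to the @ConfigurationProperties re-binding path instead.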

Note that the Config Client does not, by default, poll for changes in the Environment.

Generally, we would not recommend that approach for detecting changes (although you could set it up with a @Scheduled annotation).

If you have a scaled-out client application, it is better to broadcast the EnvironmentChangeEvent to all the instances instead of having them poll for changes (for example, by using the Spring Cloud Bus).

The EnvironmentChangeEvent covers a large class of refresh use cases, as long as you can actually make a change to the Environment and publish the event.

Note that those APIs are public and part of core Spring.

You can verify that the changes are bound to @ConfigurationProperties beans by visiting the /configprops endpoint (a normal Spring Boot Actuator feature).

For instance, a DataSource can have its maxPoolSize changed at runtime (the default DataSource created by Spring Boot is an @ConfigurationProperties bean) and grow capacity dynamically.

Re-binding @ConfigurationProperties does not cover another large class of use cases, where you need more control over the refresh and where you need a change to be atomic over the whole ApplicationContext.

To address those concerns, we have @RefreshScope.

When there is a configuration change, a Spring @Bean that is marked as @RefreshScope gets special treatment.

This feature addresses the problem of stateful beans that only get their configuration injected when they are initialized.

For instance, if a DataSource has open connections when the database URL is changed via the Environment, you probably want the holders of those connections to be able to complete what they are doing.

Then, the next time something borrows a connection from the pool, it gets one with the new URL.

Sometimes, it might even be mandatory to apply the @RefreshScope annotation on some beans that can be initialized only once. If a bean is "immutable", you have to either annotate the bean with @RefreshScope or specify the classname under the property key spring.cloud.refresh.extra-refreshable.

Important: If you create a

Refresh scope beans are lazy proxies that initialize when they are used (that is, when a method is called), and the scope acts as a cache of initialized values. To force a bean to re-initialize on the next method call, you must invalidate its cache entry.

The RefreshScope is a bean in the context and has a public refreshAll() method to refresh all beans in the scope by clearing the target cache.

The /refresh endpoint exposes this functionality (over HTTP or JMX).

To refresh an individual bean by name, there is also a refresh(String) method.
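The cache-and-invalidate behavior described above can be modeled in a few lines of plain Java. This is a conceptual sketch only: SimpleRefreshScope, register and get are hypothetical names, refresh(String) and refreshAll() merely mirror the methods named above, and the sketch does no proxying and runs no destruction callbacks.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Supplier;

/** Simplified model of a refresh scope: a cache of lazily created instances that can be cleared. */
final class SimpleRefreshScope {
    private final Map<String, Supplier<?>> factories = new ConcurrentHashMap<>();
    private final Map<String, Object> cache = new ConcurrentHashMap<>();

    void register(String name, Supplier<?> factory) {
        factories.put(name, factory);
    }

    /** Returns the cached instance, creating it on first use (lazy initialization). */
    Object get(String name) {
        Supplier<?> factory = factories.get(name);
        if (factory == null) {
            throw new IllegalArgumentException("No bean named " + name);
        }
        return cache.computeIfAbsent(name, n -> factory.get());
    }

    /** Invalidates one cache entry so the next get() re-creates the instance. */
    void refresh(String name) {
        cache.remove(name);
    }

    /** Invalidates every cache entry. */
    void refreshAll() {
        cache.clear();
    }
}
```

Callers keep holding the scope (in Spring, the proxy) rather than the instance, so after a refresh they transparently pick up a freshly built object on their next call.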

To expose the /refresh endpoint, you need to add the following configuration to your application:

```yaml
management:
  endpoints:
    web:
      exposure:
        include: refresh
```


Spring Cloud has an Environment pre-processor for decrypting property values locally.

It follows the same rules as the Config Server and has the same external configuration through encrypt.*.

Thus, you can use encrypted values in the form of {cipher}* and, as long as there is a valid key, they are decrypted before the main application context gets the Environment settings.
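As a rough model of that pre-processing step, the following sketch walks a map of properties and decrypts every value that carries the {cipher} prefix. CipherPreprocessor is hypothetical, and the decryptor is a caller-supplied placeholder: in a real application, decryption is driven by the encrypt.* key configuration, which this sketch does not model.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.UnaryOperator;

/** Hypothetical sketch: decrypts every "{cipher}"-prefixed value before the properties are used. */
final class CipherPreprocessor {
    static final String PREFIX = "{cipher}";

    static Map<String, String> decryptAll(Map<String, String> properties, UnaryOperator<String> decryptor) {
        Map<String, String> result = new LinkedHashMap<>();
        for (Map.Entry<String, String> entry : properties.entrySet()) {
            String value = entry.getValue();
            if (value != null && value.startsWith(PREFIX)) {
                // Strip the marker and hand only the ciphertext to the decryptor
                value = decryptor.apply(value.substring(PREFIX.length()));
            }
            result.put(entry.getKey(), value);
        }
        return result;
    }
}
```

Plain values pass through untouched, so encrypted and unencrypted properties can be mixed in the same source.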

To use the encryption features in an application, you need to include Spring Security RSA in your classpath (Maven co-ordinates: "org.springframework.security:spring-security-rsa"), and you also need the full strength JCE extensions in your JVM.

If you get an exception due to "Illegal key size" and you use Sun’s JDK, you need to install the Java Cryptography Extension (JCE) Unlimited Strength Jurisdiction Policy Files. See the following links for more information:

Extract the files into the JDK/jre/lib/security folder for whichever version of JRE/JDK x64/x86 you use.

For a Spring Boot Actuator application, some additional management endpoints are available. You can use:

- POST to /actuator/env to update the Environment and rebind @ConfigurationProperties and log levels.

- /actuator/refresh to re-load the bootstrap context and refresh the @RefreshScope beans.

- /actuator/restart to close the ApplicationContext and restart it (disabled by default).

- /actuator/pause and /actuator/resume for calling the Lifecycle methods (stop() and start() on the ApplicationContext).
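Because refresh changes application state, it is invoked with POST (Spring Boot Actuator maps write operations to POST). The following minimal sketch uses the JDK's java.net.http client (Java 11+). It assumes the application runs at http://localhost:8080 and that the endpoint is exposed over HTTP. RefreshTrigger is a hypothetical name, and any security your actuator endpoints require is not handled here.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

/** Hypothetical client that asks a running application to refresh its configuration. */
final class RefreshTrigger {
    /** Builds a POST to {baseUrl}/actuator/refresh with an empty body. */
    static HttpRequest refreshRequest(String baseUrl) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/actuator/refresh"))
                .timeout(Duration.ofSeconds(5))
                .POST(HttpRequest.BodyPublishers.noBody())
                .build();
    }

    /** Sends the request and returns the raw response; the caller decides how to treat non-2xx codes. */
    static HttpResponse<String> trigger(HttpClient client, String baseUrl)
            throws IOException, InterruptedException {
        return client.send(refreshRequest(baseUrl), HttpResponse.BodyHandlers.ofString());
    }
}
```

A call such as `RefreshTrigger.trigger(HttpClient.newHttpClient(), "http://localhost:8080")` refreshes a single instance; for many instances, broadcasting over the Spring Cloud Bus is the approach recommended earlier in this section.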

Note: If you disable the

Patterns such as service discovery, load balancing, and circuit breakers lend themselves to a common abstraction layer that can be consumed by all Spring Cloud clients, independent of the implementation (for example, discovery with Eureka or Consul).

Spring Cloud Commons provides the @EnableDiscoveryClient annotation.

This looks for implementations of the DiscoveryClient interface with META-INF/spring.factories.

Implementations of the Discovery Client add a configuration class to spring.factories under the org.springframework.cloud.client.discovery.EnableDiscoveryClient key.

Examples of DiscoveryClient implementations include Spring Cloud Netflix Eureka, Spring Cloud Consul Discovery, and Spring Cloud Zookeeper Discovery.

By default, implementations of DiscoveryClient auto-register the local Spring Boot server with the remote discovery server.

This behavior can be disabled by setting autoRegister=false in @EnableDiscoveryClient.


Commons creates a Spring Boot HealthIndicator that DiscoveryClient implementations can participate in by implementing DiscoveryHealthIndicator.

To disable the composite HealthIndicator, set spring.cloud.discovery.client.composite-indicator.enabled=false.

A generic HealthIndicator based on DiscoveryClient is auto-configured (DiscoveryClientHealthIndicator).

To disable it, set spring.cloud.discovery.client.health-indicator.enabled=false.

To disable the description field of the DiscoveryClientHealthIndicator, set spring.cloud.discovery.client.health-indicator.include-description=false.

Otherwise, it can bubble up as the description of the rolled up HealthIndicator.

The DiscoveryClient interface extends Ordered. This is useful when you use multiple discovery clients, because it lets you define the order of the returned discovery clients, similar to how you can order the beans loaded by a Spring application. By default, the order of any DiscoveryClient is set to 0. If you want to set a different order for your custom DiscoveryClient implementations, override the getOrder() method so that it returns a value suitable for your setup. You can also use properties to set the order of the DiscoveryClient implementations provided by Spring Cloud, such as ConsulDiscoveryClient, EurekaDiscoveryClient, and ZookeeperDiscoveryClient. To do so, set the spring.cloud.{clientIdentifier}.discovery.order property (or eureka.client.order for Eureka) to the desired value.
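Spring's Ordered convention treats lower values as higher priority. The following plain-JDK sketch models that ordering rule so you can see how a client that overrides getOrder() compares with clients that keep the default of 0. OrderedClient and DiscoveryClientOrdering are hypothetical stand-ins, not the Spring Cloud API.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical stand-in for the Ordered contract of DiscoveryClient (not the Spring Cloud type).
interface OrderedClient {
    String description();

    // Mirrors the documented default order of 0.
    default int getOrder() {
        return 0;
    }
}

final class DiscoveryClientOrdering {

    // Sorts clients so that lower order values come first (stable for equal values).
    static List<String> sortedDescriptions(List<OrderedClient> clients) {
        List<OrderedClient> copy = new ArrayList<>(clients);
        copy.sort(Comparator.comparingInt(OrderedClient::getOrder));
        List<String> result = new ArrayList<>();
        for (OrderedClient client : copy) {
            result.add(client.description());
        }
        return result;
    }

    // Helper that builds a client with a fixed description and order.
    static OrderedClient client(String description, int order) {
        return new OrderedClient() {
            @Override
            public String description() {
                return description;
            }

            @Override
            public int getOrder() {
                return order;
            }
        };
    }

    public static void main(String[] args) {
        List<OrderedClient> clients = List.of(
                client("consul", 0),
                client("custom", -1), // overrides getOrder() so it comes first
                client("eureka", 10));
        System.out.println(sortedDescriptions(clients)); // [custom, consul, eureka]
    }
}
```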

Commons now provides a ServiceRegistry interface that provides methods such as register(Registration) and deregister(Registration), which let you provide custom registered services.

Registration is a marker interface.

The following example shows the ServiceRegistry in use:

    @Configuration
    @EnableDiscoveryClient(autoRegister=false)
    public class MyConfiguration {
        private ServiceRegistry registry;

        public MyConfiguration(ServiceRegistry registry) {
            this.registry = registry;
        }

        // called through some external process, such as an event or a custom actuator endpoint
        public void register() {
            Registration registration = constructRegistration();
            this.registry.register(registration);
        }
    }

Each ServiceRegistry implementation has its own Registration implementation:

* ZookeeperRegistration used with ZookeeperServiceRegistry
* EurekaRegistration used with EurekaServiceRegistry
* ConsulRegistration used with ConsulServiceRegistry

If you use the ServiceRegistry interface, you need to pass the correct Registration implementation for the ServiceRegistry implementation you are using.

By default, the ServiceRegistry implementation auto-registers the running service.

To disable that behavior, you can set:

* @EnableDiscoveryClient(autoRegister=false) to permanently disable auto-registration.

* spring.cloud.service-registry.auto-registration.enabled=false to disable the behavior through configuration.

Two events are fired when a service auto-registers. The first event, called InstancePreRegisteredEvent, is fired before the service is registered. The second event, called InstanceRegisteredEvent, is fired after the service is registered. You can register one or more ApplicationListener beans to listen for and react to these events.

![[Note]](images/note.png) | Note |

|---|---|

These events will not be fired if |

Spring Cloud Commons provides a /service-registry actuator endpoint.

This endpoint relies on a Registration bean in the Spring Application Context.

Calling /service-registry with GET returns the status of the Registration.

Using POST to the same endpoint with a JSON body changes the status of the current Registration to the new value.

The JSON body has to include the status field with the preferred value.

Please see the documentation of the ServiceRegistry implementation you use for the allowed values when updating the status and the values returned for the status.

For instance, Eureka’s supported statuses are UP, DOWN, OUT_OF_SERVICE, and UNKNOWN.
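As a hedged illustration, the following sketch uses the JDK's java.net.http client (Java 11+) to build the GET and POST requests described above. The URL http://localhost:8080/actuator/service-registry is an assumption. Adjust the host, port, and base path to your actuator configuration, and use a status value that your ServiceRegistry implementation accepts.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

final class ServiceRegistryStatusClient {

    // Assumed location of the endpoint; not taken from the text above.
    static final URI ENDPOINT = URI.create("http://localhost:8080/actuator/service-registry");

    // GET returns the status of the current Registration.
    static HttpRequest statusRequest() {
        return HttpRequest.newBuilder(ENDPOINT).GET().build();
    }

    // POST with a JSON body whose "status" field carries the new value.
    static HttpRequest updateRequest(String status) {
        String json = "{\"status\":\"" + status + "\"}";
        return HttpRequest.newBuilder(ENDPOINT)
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(json))
                .build();
    }

    public static void main(String[] args) throws Exception {
        HttpClient client = HttpClient.newHttpClient();
        // For Eureka, OUT_OF_SERVICE is one of the supported statuses.
        HttpResponse<String> response =
                client.send(updateRequest("OUT_OF_SERVICE"), HttpResponse.BodyHandlers.ofString());
        System.out.println(response.statusCode() + " " + response.body());
    }
}
```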

A RestTemplate can be automatically configured to use Ribbon.

To create a load-balanced RestTemplate, create a RestTemplate @Bean and use the @LoadBalanced qualifier, as shown in the following example:

    @Configuration
    public class MyConfiguration {

        @LoadBalanced
        @Bean
        RestTemplate restTemplate() {
            return new RestTemplate();
        }
    }

    public class MyClass {
        @Autowired
        private RestTemplate restTemplate;

        public String doOtherStuff() {
            String results = restTemplate.getForObject("http://stores/stores", String.class);
            return results;
        }
    }

![[Caution]](images/caution.png) | Caution |

|---|---|

A |

The URI needs to use a virtual host name (that is, a service name, not a host name).

The Ribbon client is used to create a full physical address.

See RibbonAutoConfiguration for details of how the RestTemplate is set up.

WebClient can be automatically configured to use the LoadBalancerClient.

To create a load-balanced WebClient, create a WebClient.Builder @Bean and use the @LoadBalanced qualifier, as shown in the following example:

    @Configuration
    public class MyConfiguration {

        @Bean
        @LoadBalanced
        public WebClient.Builder loadBalancedWebClientBuilder() {
            return WebClient.builder();
        }
    }

    public class MyClass {
        @Autowired
        private WebClient.Builder webClientBuilder;

        public Mono<String> doOtherStuff() {
            return webClientBuilder.build().get().uri("http://stores/stores")
                            .retrieve().bodyToMono(String.class);
        }
    }

The URI needs to use a virtual host name (that is, a service name, not a host name). The Ribbon client is used to create a full physical address.

A load-balanced RestTemplate can be configured to retry failed requests.

By default, this logic is disabled.

You can enable it by adding Spring Retry to your application’s classpath.

The load-balanced RestTemplate honors some of the Ribbon configuration values related to retrying failed requests.

You can use the client.ribbon.MaxAutoRetries, client.ribbon.MaxAutoRetriesNextServer, and client.ribbon.OkToRetryOnAllOperations properties, where client is the name of your Ribbon client.

If you would like to disable the retry logic with Spring Retry on the classpath, you can set spring.cloud.loadbalancer.retry.enabled=false.

See the Ribbon documentation for a description of what these properties do.

If you would like to implement a BackOffPolicy in your retries, you need to create a bean of type LoadBalancedRetryFactory and override the createBackOffPolicy method:

    @Configuration
    public class MyConfiguration {
        @Bean
        LoadBalancedRetryFactory retryFactory() {
            return new LoadBalancedRetryFactory() {
                @Override
                public BackOffPolicy createBackOffPolicy(String service) {
                    return new ExponentialBackOffPolicy();
                }
            };
        }
    }
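To see what an exponential policy does to the wait between attempts, the following plain-JDK sketch computes the delay sequence. It is not Spring Retry's ExponentialBackOffPolicy. The values used (100 ms initial interval, multiplier 2.0, 30 s cap) are assumed to match that class's defaults, so check them against the Spring Retry version you use.

```java
import java.util.ArrayList;
import java.util.List;

final class ExponentialBackoffSketch {

    // Assumed defaults, chosen to resemble Spring Retry's ExponentialBackOffPolicy.
    static final long INITIAL_INTERVAL_MS = 100L;
    static final double MULTIPLIER = 2.0;
    static final long MAX_INTERVAL_MS = 30_000L;

    // Returns the sleep (in ms) before each of the next `retries` attempts, capped at the maximum.
    static List<Long> delays(int retries) {
        List<Long> result = new ArrayList<>();
        double next = INITIAL_INTERVAL_MS;
        for (int i = 0; i < retries; i++) {
            result.add((long) Math.min(next, MAX_INTERVAL_MS));
            next *= MULTIPLIER;
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(delays(5)); // [100, 200, 400, 800, 1600]
    }
}
```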


If you want to add one or more RetryListener implementations to your retry functionality, create a bean of type LoadBalancedRetryListenerFactory and return the RetryListener array you would like to use for a given service, as shown in the following example:

    @Configuration
    public class MyConfiguration {
        @Bean
        LoadBalancedRetryListenerFactory retryListenerFactory() {
            return new LoadBalancedRetryListenerFactory() {
                @Override
                public RetryListener[] createRetryListeners(String service) {
                    return new RetryListener[]{new RetryListener() {
                        // called before the first attempt; returning false vetoes the whole retry
                        @Override
                        public <T, E extends Throwable> boolean open(RetryContext context, RetryCallback<T, E> callback) {
                            //TODO Do your business...
                            return true;
                        }

                        // called after the final attempt, whether it succeeded or not
                        @Override
                        public <T, E extends Throwable> void close(RetryContext context, RetryCallback<T, E> callback, Throwable throwable) {
                            //TODO Do your business...
                        }

                        // called after every unsuccessful attempt
                        @Override
                        public <T, E extends Throwable> void onError(RetryContext context, RetryCallback<T, E> callback, Throwable throwable) {
                            //TODO Do your business...
                        }
                    }};
                }
            };
        }
    }

If you want a RestTemplate that is not load-balanced, create a RestTemplate bean and inject it. To access the load-balanced RestTemplate, use the @LoadBalanced qualifier when you create your @Bean, as shown in the following example:

    @Configuration
    public class MyConfiguration {

        @LoadBalanced
        @Bean
        RestTemplate loadBalanced() {
            return new RestTemplate();
        }

        @Primary
        @Bean
        RestTemplate restTemplate() {
            return new RestTemplate();
        }
    }

    public class MyClass {
        @Autowired
        private RestTemplate restTemplate;

        @Autowired
        @LoadBalanced
        private RestTemplate loadBalanced;

        public String doOtherStuff() {
            return loadBalanced.getForObject("http://stores/stores", String.class);
        }

        public String doStuff() {
            return restTemplate.getForObject("http://example.com", String.class);
        }
    }

![[Important]](images/important.png) | Important |

|---|---|

Notice the use of the |

![[Tip]](images/tip.png) | Tip |

|---|---|

If you see errors such as |

WebClient can be configured to use the LoadBalancerClient. LoadBalancerExchangeFilterFunction is auto-configured if spring-webflux is on the classpath. The following example shows how to configure a WebClient to use a load balancer:

    public class MyClass {
        @Autowired
        private LoadBalancerExchangeFilterFunction lbFunction;

        public Mono<String> doOtherStuff() {
            return WebClient.builder().baseUrl("http://stores")
                .filter(lbFunction)
                .build()
                .get()
                .uri("/stores")
                .retrieve()
                .bodyToMono(String.class);
        }
    }

The URI needs to use a virtual host name (that is, a service name, not a host name).

The LoadBalancerClient is used to create a full physical address.

Sometimes, it is useful to ignore certain named network interfaces so that they can be excluded from Service Discovery registration (for example, when running in a Docker container).

A list of regular expressions can be set to cause the desired network interfaces to be ignored.

The following configuration ignores the docker0 interface and all interfaces that start with veth:

application.yml.

    spring:
      cloud:
        inetutils:
          ignoredInterfaces:
            - docker0
            - veth.*
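The entries under ignoredInterfaces are regular expressions matched against the interface name. The following plain-JDK sketch is modeled on, not copied from, Spring Cloud's InetUtils. It assumes whole-name matching as in String.matches. Under that assumption, veth.* needs the trailing .* while docker0 matches only that exact name. The class name is hypothetical.

```java
import java.net.NetworkInterface;
import java.net.SocketException;
import java.util.Collections;
import java.util.List;

final class InterfaceFilterSketch {

    // Same entries as the application.yml example above.
    static final List<String> IGNORED = List.of("docker0", "veth.*");

    // True if the whole interface name matches any configured regular expression.
    static boolean isIgnored(String interfaceName, List<String> patterns) {
        for (String regex : patterns) {
            if (interfaceName.matches(regex)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) throws SocketException {
        // Prints the local interfaces that would survive the filter.
        for (NetworkInterface nic : Collections.list(NetworkInterface.getNetworkInterfaces())) {
            if (!isIgnored(nic.getName(), IGNORED)) {
                System.out.println("candidate: " + nic.getName());
            }
        }
    }
}
```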

You can also force the use of only specified network addresses by using a list of regular expressions, as shown in the following example:

bootstrap.yml.

    spring:
      cloud:
        inetutils:
          preferredNetworks:
            - 192.168
            - 10.0
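Entries such as 192.168 are not full regular expressions for an address. The rule assumed here, based on Spring Cloud's InetUtils, is that an address is preferred if it either fully matches an entry as a regular expression or starts with the entry. The following plain-JDK sketch models that rule. Verify it against the Spring Cloud Commons version you use.

```java
import java.util.List;

final class PreferredNetworkSketch {

    // Same entries as the bootstrap.yml example above.
    static final List<String> PREFERRED = List.of("192.168", "10.0");

    // Assumed rule: a regex full match or a plain prefix match marks the address as preferred.
    static boolean isPreferred(String hostAddress, List<String> preferredNetworks) {
        if (preferredNetworks.isEmpty()) {
            return true; // nothing configured: every address qualifies
        }
        for (String entry : preferredNetworks) {
            if (hostAddress.matches(entry) || hostAddress.startsWith(entry)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        System.out.println(isPreferred("192.168.1.20", PREFERRED)); // true
        System.out.println(isPreferred("172.17.0.2", PREFERRED));   // false
    }
}
```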

You can also force the use of only site-local addresses, as shown in the following example:

application.yml.

    spring:
      cloud:
        inetutils:
          useOnlySiteLocalInterfaces: true

See Inet4Address.isSiteLocalAddress() in the Java API documentation for more details about what constitutes a site-local address.
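InetAddress.isSiteLocalAddress() is a standard JDK method, so you can check how an address is classified without starting Spring. For IPv4 it covers the private ranges 10/8, 172.16/12, and 192.168/16. The following sketch wraps it in a small helper. The class name is illustrative.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

final class SiteLocalCheck {

    // Parses an IP literal (no DNS lookup happens for literals) and asks the JDK whether it is site-local.
    static boolean isSiteLocal(String ipLiteral) {
        try {
            return InetAddress.getByName(ipLiteral).isSiteLocalAddress();
        } catch (UnknownHostException e) {
            throw new IllegalArgumentException("Not a valid address: " + ipLiteral, e);
        }
    }

    public static void main(String[] args) {
        for (String ip : new String[] {"10.1.2.3", "172.16.0.5", "192.168.0.10", "8.8.8.8"}) {
            System.out.println(ip + " site-local: " + isSiteLocal(ip));
        }
    }
}
```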

Spring Cloud Commons provides beans for creating both Apache HTTP clients (ApacheHttpClientFactory) and OK HTTP clients (OkHttpClientFactory).

The OkHttpClientFactory bean is created only if the OK HTTP jar is on the classpath.

In addition, Spring Cloud Commons provides beans for creating the connection managers used by both clients: ApacheHttpClientConnectionManagerFactory for the Apache HTTP client and OkHttpClientConnectionPoolFactory for the OK HTTP client.

If you would like to customize how the HTTP clients are created in downstream projects, you can provide your own implementation of these beans.

In addition, if you provide a bean of type HttpClientBuilder or OkHttpClient.Builder, the default factories use these builders as the basis for the builders returned to downstream projects.

You can also disable the creation of these beans by setting spring.cloud.httpclientfactories.apache.enabled or spring.cloud.httpclientfactories.ok.enabled to false.

Spring Cloud Commons provides a /features actuator endpoint.

This endpoint returns features available on the classpath and whether they are enabled.

The information returned includes the feature type, name, version, and vendor.

There are two types of 'features': abstract and named.

Abstract features are features for which an interface or abstract class is defined and that an implementation creates, such as DiscoveryClient, LoadBalancerClient, or LockService.

The abstract class or interface is used to find a bean of that type in the context.

The version displayed is bean.getClass().getPackage().getImplementationVersion().

Named features are features that do not have a particular class they implement, such as "Circuit Breaker", "API Gateway", "Spring Cloud Bus", and others. These features require a name and a bean type.

Any module can declare any number of HasFeatures beans, as shown in the following examples:

    @Bean
    public HasFeatures commonsFeatures() {
        return HasFeatures.abstractFeatures(DiscoveryClient.class, LoadBalancerClient.class);
    }

    @Bean
    public HasFeatures consulFeatures() {
        return HasFeatures.namedFeatures(
            new NamedFeature("Spring Cloud Bus", ConsulBusAutoConfiguration.class),
            new NamedFeature("Circuit Breaker", HystrixCommandAspect.class));
    }

    @Bean
    HasFeatures localFeatures() {
        return HasFeatures.builder()
            .abstractFeature(Foo.class)
            .namedFeature(new NamedFeature("Bar Feature", Bar.class))
            .abstractFeature(Baz.class)
            .build();
    }

Each of these beans should go in an appropriately guarded @Configuration.

Because some users have problems setting up Spring Cloud applications, we have added a compatibility verification mechanism. It breaks if your current setup is not compatible with Spring Cloud requirements and produces a report that shows exactly what went wrong.

At the moment we verify which version of Spring Boot is added to your classpath.

Example of a report

    ***************************
    APPLICATION FAILED TO START
    ***************************

    Description:

    Your project setup is incompatible with our requirements due to following reasons:

    - Spring Boot [2.1.0.RELEASE] is not compatible with this Spring Cloud release train


    Action:

    Consider applying the following actions:

    - Change Spring Boot version to one of the following versions [1.2.x, 1.3.x] .
    You can find the latest Spring Boot versions here [https://spring.io/projects/spring-boot#learn].
    If you want to learn more about the Spring Cloud Release train compatibility, you can visit this page [https://spring.io/projects/spring-cloud#overview] and check the [Release Trains] section.

To disable this feature, set spring.cloud.compatibility-verifier.enabled to false.

If you want to override the compatible Spring Boot versions, set the spring.cloud.compatibility-verifier.compatible-boot-versions property to a comma-separated list of compatible Spring Boot versions.
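The exact matching rule for each entry is not spelled out here. The following plain-JDK sketch shows one plausible reading, assumed for illustration only. Each comma-separated entry such as 2.1.x is treated as a version prefix, with the trailing x acting as a wildcard, and any other entry must match exactly. BootVersionCheckSketch is a hypothetical name, not the verifier's code.

```java
import java.util.Arrays;

final class BootVersionCheckSketch {

    // Hypothetical check: does bootVersion fall under any entry of the comma-separated list?
    static boolean isCompatible(String bootVersion, String compatibleBootVersions) {
        return Arrays.stream(compatibleBootVersions.split(","))
                .map(String::trim)
                .filter(entry -> !entry.isEmpty())
                .anyMatch(entry -> matches(bootVersion, entry));
    }

    // "2.1.x" matches "2.1.0.RELEASE" but not "2.10.0"; other entries must match exactly.
    private static boolean matches(String bootVersion, String entry) {
        if (entry.endsWith(".x")) {
            String prefix = entry.substring(0, entry.length() - 1); // keeps the dot, e.g. "2.1."
            return bootVersion.startsWith(prefix);
        }
        return bootVersion.equals(entry);
    }

    public static void main(String[] args) {
        System.out.println(isCompatible("2.1.0.RELEASE", "2.0.x, 2.1.x")); // true
        System.out.println(isCompatible("2.2.0.RELEASE", "2.0.x, 2.1.x")); // false
    }
}
```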

1.0.0.BUILD-SNAPSHOT

Spring Cloud Config provides server-side and client-side support for externalized configuration in a distributed system. With the Config Server, you have a central place to manage external properties for applications across all environments.

The concepts on both client and server map identically to the Spring Environment and PropertySource abstractions, so they fit very well with Spring applications but can be used with any application running in any language.

As an application moves through the deployment pipeline from dev to test and into production, you can manage the configuration between those environments and be certain that applications have everything they need to run when they migrate.

The default implementation of the server storage backend uses git, so it easily supports labelled versions of configuration environments as well as being accessible to a wide range of tooling for managing the content.

It is easy to add alternative implementations and plug them in with Spring configuration.

This quick start walks through using both the server and the client of Spring Cloud Config Server.

First, start the server, as follows:

    $ cd spring-cloud-config-server
    $ ../mvnw spring-boot:run

The server is a Spring Boot application, so you can run it from your IDE if you prefer to do so (the main class is ConfigServerApplication).

Next, try out a client, as follows:

    $ curl localhost:8888/foo/development
    {"name":"foo","label":"master","propertySources":[
      {"name":"https://github.com/scratches/config-repo/foo-development.properties","source":{"bar":"spam"}},
      {"name":"https://github.com/scratches/config-repo/foo.properties","source":{"foo":"bar"}}
    ]}

The default strategy for locating property sources is to clone a git repository (at spring.cloud.config.server.git.uri) and use it to initialize a mini SpringApplication.

The mini-application’s Environment is used to enumerate property sources and publish them at a JSON endpoint.

The HTTP service has resources in the following form:

    /{application}/{profile}[/{label}]
    /{application}-{profile}.yml
    /{label}/{application}-{profile}.yml
    /{application}-{profile}.properties
    /{label}/{application}-{profile}.properties

where application is injected as the spring.config.name in the SpringApplication (what is normally application in a regular Spring Boot app), profile is an active profile (or comma-separated list of profiles), and label is an optional git label (defaults to master).
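To make the mapping concrete, the following plain-JDK sketch expands the five resource forms for one application, profile, and label. The class name and the example values (foo, development, master) are illustrative only. The server is what actually resolves these paths.

```java
import java.util.List;

final class ConfigResourcePaths {

    // Expands the documented HTTP resource forms for one application/profile/label triple.
    static List<String> paths(String application, String profile, String label) {
        return List.of(
                "/" + application + "/" + profile + "/" + label,
                "/" + application + "-" + profile + ".yml",
                "/" + label + "/" + application + "-" + profile + ".yml",
                "/" + application + "-" + profile + ".properties",
                "/" + label + "/" + application + "-" + profile + ".properties");
    }

    public static void main(String[] args) {
        // Each path is relative to the server, for example http://localhost:8888
        paths("foo", "development", "master").forEach(System.out::println);
    }
}
```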

Spring Cloud Config Server pulls configuration for remote clients from a git repository (which must be provided), as shown in the following example:

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://github.com/spring-cloud-samples/config-repo

To use these features in an application, you can build it as a Spring Boot application that depends on spring-cloud-config-client (for an example, see the test cases for the config-client or the sample application).

The most convenient way to add the dependency is with a Spring Boot starter org.springframework.cloud:spring-cloud-starter-config.

There is also a parent pom and BOM (spring-cloud-starter-parent) for Maven users and a Spring IO version management properties file for Gradle and Spring CLI users. The following example shows a typical Maven configuration:

pom.xml.

    <parent>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-parent</artifactId>
        <version>{spring-boot-docs-version}</version>
        <relativePath /> <!-- lookup parent from repository -->
    </parent>

    <dependencyManagement>
        <dependencies>
            <dependency>
                <groupId>org.springframework.cloud</groupId>
                <artifactId>spring-cloud-dependencies</artifactId>
                <version>{spring-cloud-version}</version>
                <type>pom</type>
                <scope>import</scope>
            </dependency>
        </dependencies>
    </dependencyManagement>

    <dependencies>
        <dependency>
            <groupId>org.springframework.cloud</groupId>
            <artifactId>spring-cloud-starter-config</artifactId>
        </dependency>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-test</artifactId>
            <scope>test</scope>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <plugin>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-maven-plugin</artifactId>
            </plugin>
        </plugins>
    </build>

    <!-- repositories also needed for snapshots and milestones -->

Now you can create a standard Spring Boot application, such as the following HTTP server:

    @SpringBootApplication
    @RestController
    public class Application {

        @RequestMapping("/")
        public String home() {
            return "Hello World!";
        }

        public static void main(String[] args) {
            SpringApplication.run(Application.class, args);
        }

    }

When this HTTP server runs, it picks up the external configuration from the default local config server (if it is running) on port 8888.

To modify the startup behavior, you can change the location of the config server by using bootstrap.properties (similar to application.properties but for the bootstrap phase of an application context), as shown in the following example:

spring.cloud.config.uri: http://myconfigserver.com

By default, if no application name is set, application will be used. To modify the name, the following property can be added to the bootstrap.properties file:

spring.application.name: myapp

![[Note]](images/note.png) | Note |

|---|---|

When setting the property |

The bootstrap properties show up in the /env endpoint as a high-priority property source, as shown in the following example.

    $ curl localhost:8080/env
    {
      "profiles":[],
      "configService:https://github.com/spring-cloud-samples/config-repo/bar.properties":{"foo":"bar"},
      "servletContextInitParams":{},
      "systemProperties":{...},
      ...
    }

A property source called configService:<URL of remote repository>/<file name> contains the foo property with a value of bar and has the highest priority.

![[Note]](images/note.png) | Note |

|---|---|

The URL in the property source name is the git repository, not the config server URL. |

Spring Cloud Config Server provides an HTTP resource-based API for external configuration (name-value pairs or equivalent YAML content).

The server is embeddable in a Spring Boot application by using the @EnableConfigServer annotation.

Consequently, the following application is a config server:

ConfigServer.java.

    @SpringBootApplication
    @EnableConfigServer
    public class ConfigServer {
      public static void main(String[] args) {
        SpringApplication.run(ConfigServer.class, args);
      }
    }

Like all Spring Boot applications, it runs on port 8080 by default, but you can switch it to the more conventional port 8888 in various ways.

The easiest, which also sets a default configuration repository, is by launching it with spring.config.name=configserver (there is a configserver.yml in the Config Server jar).

Another is to use your own application.properties, as shown in the following example:

application.properties.

    server.port: 8888
    spring.cloud.config.server.git.uri: file://${user.home}/config-repo

where ${user.home}/config-repo is a git repository containing YAML and properties files.

![[Note]](images/note.png) | Note |

|---|---|

On Windows, you need an extra "/" in the file URL if it is absolute with a drive prefix (for example, |

![[Tip]](images/tip.png) | Tip |

|---|---|

The following listing shows a recipe for creating the git repository in the preceding example: $ cd $HOME $ mkdir config-repo $ cd config-repo $ git init . $ echo info.foo: bar > application.properties $ git add -A . $ git commit -m "Add application.properties" |

![[Warning]](images/warning.png) | Warning |

|---|---|

Using the local filesystem for your git repository is intended for testing only. You should use a server to host your configuration repositories in production. |

![[Warning]](images/warning.png) | Warning |

|---|---|

The initial clone of your configuration repository can be quick and efficient if you keep only text files in it. If you store binary files, especially large ones, you may experience delays on the first request for configuration or encounter out of memory errors in the server. |

Where should you store the configuration data for the Config Server?

The strategy that governs this behaviour is the EnvironmentRepository, serving Environment objects.

This Environment is a shallow copy of the domain from the Spring Environment (including propertySources as the main feature).

The Environment resources are parametrized by three variables:

* {application}, which maps to spring.application.name on the client side.
* {profile}, which maps to spring.profiles.active on the client (comma-separated list).
* {label}, which is a server side feature labelling a "versioned" set of config files.

Repository implementations generally behave like a Spring Boot application, loading configuration files from a spring.config.name equal to the {application} parameter, and spring.profiles.active equal to the {profile} parameter.

Precedence rules for profiles are also the same as in a regular Spring Boot application: Active profiles take precedence over defaults, and, if there are multiple profiles, the last one wins (similar to adding entries to a Map).
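The Map analogy can be made concrete. The following plain-JDK sketch is a simplified model, not the server's implementation. It layers property maps in order, defaults first and then each active profile in the order listed, so that later layers overwrite earlier ones. The property names and values are made up for illustration.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class ProfilePrecedenceSketch {

    // Merges layers in order; a later layer overwrites keys set by an earlier one, like Map.putAll.
    static Map<String, String> merge(List<Map<String, String>> layersLowestFirst) {
        Map<String, String> merged = new LinkedHashMap<>();
        for (Map<String, String> layer : layersLowestFirst) {
            merged.putAll(layer);
        }
        return merged;
    }

    public static void main(String[] args) {
        Map<String, String> defaults = Map.of("db.url", "jdbc:h2:mem:test", "greeting", "hello");
        Map<String, String> dev = Map.of("greeting", "hello from dev");
        Map<String, String> mysql = Map.of("db.url", "jdbc:mysql://localhost/foo");
        // Active profiles "dev,mysql": defaults first, then dev, then mysql (the last one wins).
        Map<String, String> effective = merge(List.of(defaults, dev, mysql));
        System.out.println(effective.get("greeting")); // hello from dev
        System.out.println(effective.get("db.url"));   // jdbc:mysql://localhost/foo
    }
}
```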

The following sample client application has this bootstrap configuration:

bootstrap.yml.

    spring:
      application:
        name: foo
      profiles:
        active: dev,mysql

(As usual with a Spring Boot application, these properties could also be set by environment variables or command line arguments).

If the repository is file-based, the server creates an Environment from application.yml (shared between all clients) and foo.yml (with foo.yml taking precedence).

If the YAML files have documents inside them that point to Spring profiles, those are applied with higher precedence (in order of the profiles listed).

If there are profile-specific YAML (or properties) files, these are also applied with higher precedence than the defaults.

Higher precedence translates to a PropertySource listed earlier in the Environment.

(These same rules apply in a standalone Spring Boot application.)

You can set spring.cloud.config.server.accept-empty to false so that the server returns an HTTP 404 status if the application is not found. By default, this flag is set to true.

The default implementation of EnvironmentRepository uses a Git backend, which is very convenient for managing upgrades and physical environments and for auditing changes.

To change the location of the repository, you can set the spring.cloud.config.server.git.uri configuration property in the Config Server (for example in application.yml).

If you set it with a file: prefix, it should work from a local repository so that you can get started quickly and easily without a server. However, in that case, the server operates directly on the local repository without cloning it (it does not matter if it is not bare because the Config Server never makes changes to the "remote" repository).

To scale the Config Server up and make it highly available, you need to have all instances of the server pointing to the same repository, so only a shared file system would work.

Even in that case, it is better to use the ssh: protocol for a shared filesystem repository, so that the server can clone it and use a local working copy as a cache.

This repository implementation maps the {label} parameter of the HTTP resource to a git label (commit id, branch name, or tag).

If the git branch or tag name contains a slash (/), then the label in the HTTP URL should instead be specified with the special string (_) (to avoid ambiguity with other URL paths).

For example, if the label is foo/bar, replacing the slash would result in the following label: foo(_)bar.

The inclusion of the special string (_) can also be applied to the {application} parameter.

If you use a command-line client such as curl, be careful with the brackets in the URL — you should escape them from the shell with single quotes ('').
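A client-side helper for this substitution might look like the following plain-JDK sketch. The class and method names are illustrative assumptions. The only documented rule is that a / in a label or application name is sent as (_).

```java
final class LabelPathEncoder {

    // Replaces every slash with the special string (_) so the value fits in one URL path segment.
    static String encode(String labelOrApplication) {
        return labelOrApplication.replace("/", "(_)");
    }

    // Builds the /{application}/{profile}/{label} resource path with both names encoded.
    static String resourcePath(String application, String profile, String label) {
        return "/" + encode(application) + "/" + profile + "/" + encode(label);
    }

    public static void main(String[] args) {
        // With curl, quote the URL so the shell does not interpret the parentheses:
        //   curl 'http://localhost:8888/foo/development/foo(_)bar'
        System.out.println(resourcePath("foo", "development", "foo/bar")); // /foo/development/foo(_)bar
    }
}
```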

The configuration server’s validation of the Git server’s SSL certificate can be disabled by setting the git.skipSslValidation property to true (default is false).

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://example.com/my/repo
              skipSslValidation: true

You can configure the time, in seconds, that the configuration server will wait to acquire an HTTP connection. Use the git.timeout property.

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://example.com/my/repo
              timeout: 4

Spring Cloud Config Server supports a git repository URL with placeholders for the {application} and {profile} (and {label} if you need it, but remember that the label is applied as a git label anyway).

So you can support a “one repository per application” policy by using a structure similar to the following:

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://github.com/myorg/{application}

You can also support a “one repository per profile” policy by using a similar pattern but with {profile}.

Additionally, using the special string "(_)" within your {application} parameters can enable support for multiple organizations, as shown in the following example:

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://github.com/{application}

where {application} is provided at request time in the following format: organization(_)application.
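Placeholder expansion can be modeled as plain string substitution. The following plain-JDK sketch is a simplified illustration, not the Config Server's code. It fills {application}, {profile}, and {label} in the configured URI. It also turns (_) in the application name back into /, so that organization(_)application selects a repository under that organization. The resolve method is hypothetical.

```java
final class GitUriPlaceholders {

    // Fills the placeholders in a configured git URI; "(_)" in the application name becomes "/".
    static String resolve(String uriTemplate, String application, String profile, String label) {
        return uriTemplate
                .replace("{application}", application.replace("(_)", "/"))
                .replace("{profile}", profile)
                .replace("{label}", label);
    }

    public static void main(String[] args) {
        // One repository per application:
        System.out.println(resolve("https://github.com/myorg/{application}", "foo", "dev", "master"));
        // -> https://github.com/myorg/foo

        // Multiple organizations, with {application} sent as organization(_)application:
        System.out.println(resolve("https://github.com/{application}", "acme(_)billing", "dev", "master"));
        // -> https://github.com/acme/billing
    }
}
```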

Spring Cloud Config also includes support for more complex requirements with pattern matching on the application and profile name. The pattern format is a comma-separated list of {application}/{profile} names with wildcards (note that a pattern beginning with a wildcard may need to be quoted), as shown in the following example:

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://github.com/spring-cloud-samples/config-repo
              repos:
                simple: https://github.com/simple/config-repo
                special:
                  pattern: special*/dev*,*special*/dev*
                  uri: https://github.com/special/config-repo
                local:
                  pattern: local*
                  uri: file:/home/configsvc/config-repo

If {application}/{profile} does not match any of the patterns, it uses the default URI defined under spring.cloud.config.server.git.uri.

In the above example, for the “simple” repository, the pattern is simple/* (it only matches one application named simple in all profiles). The “local” repository matches all application names beginning with local in all profiles (the /* suffix is added automatically to any pattern that does not have a profile matcher).
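The matching rules above can be approximated in plain Java. The following sketch is an assumption-based model, not the Config Server's implementation, and its names are hypothetical. In it, * matches any run of characters and a pattern without a / gets /* appended. It also assumes that the first repository in declaration order with a matching pattern wins and that the default URI is used otherwise.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Pattern;

final class RepoPatternSketch {

    // True if "{application}/{profile}" matches the wildcard pattern ('*' = any characters).
    static boolean matches(String pattern, String application, String profile) {
        String full = pattern.contains("/") ? pattern : pattern + "/*";
        StringBuilder regex = new StringBuilder();
        for (String literal : full.split("\\*", -1)) {
            if (regex.length() > 0) {
                regex.append(".*");
            }
            regex.append(Pattern.quote(literal));
        }
        return (application + "/" + profile).matches(regex.toString());
    }

    // Assumption: repositories are checked in declaration order and the first match wins.
    static String selectUri(Map<String, List<String>> patternsByUri, String defaultUri,
            String application, String profile) {
        for (Map.Entry<String, List<String>> repo : patternsByUri.entrySet()) {
            for (String pattern : repo.getValue()) {
                if (matches(pattern.trim(), application, profile)) {
                    return repo.getKey();
                }
            }
        }
        return defaultUri;
    }

    public static void main(String[] args) {
        Map<String, List<String>> repos = new LinkedHashMap<>(); // preserves declaration order
        repos.put("https://github.com/simple/config-repo", List.of("simple"));
        // "special*/dev*,*special*/dev*" binds to two separate patterns
        repos.put("https://github.com/special/config-repo", List.of("special*/dev*", "*special*/dev*"));
        repos.put("file:/home/configsvc/config-repo", List.of("local*"));
        String fallback = "https://github.com/spring-cloud-samples/config-repo";

        System.out.println(selectUri(repos, fallback, "special-app", "dev-east")); // https://github.com/special/config-repo
        System.out.println(selectUri(repos, fallback, "local-app", "prod"));       // file:/home/configsvc/config-repo
        System.out.println(selectUri(repos, fallback, "other", "dev"));            // https://github.com/spring-cloud-samples/config-repo
    }
}
```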

![[Note]](images/note.png) | Note |

|---|---|

The “one-liner” short cut used in the “simple” example can be used only if the only property to be set is the URI. If you need to set anything else (credentials, pattern, and so on) you need to use the full form. |

The pattern property in the repo is actually an array, so you can use a YAML array (or [0], [1], etc. suffixes in properties files) to bind to multiple patterns.

You may need to do so if you are going to run apps with multiple profiles, as shown in the following example:

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://github.com/spring-cloud-samples/config-repo
              repos:
                development:
                  pattern:
                    - '*/development'
                    - '*/staging'
                  uri: https://github.com/development/config-repo
                staging:
                  pattern:
                    - '*/qa'
                    - '*/production'
                  uri: https://github.com/staging/config-repo

![[Note]](images/note.png) | Note |

|---|---|

Spring Cloud guesses that a pattern containing a profile that does not end in |

Every repository can also optionally store config files in sub-directories, and patterns to search for those directories can be specified as searchPaths.

The following example shows a config file at the top level:

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://github.com/spring-cloud-samples/config-repo
              searchPaths: foo,bar*

In the preceding example, the server searches for config files in the top level and in the foo/ sub-directory and also any sub-directory whose name begins with bar.

By default, the server clones remote repositories when configuration is first requested. The server can be configured to clone the repositories at startup, as shown in the following top-level example:

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://git/common/config-repo.git
              repos:
                team-a:
                  pattern: team-a-*
                  cloneOnStart: true
                  uri: http://git/team-a/config-repo.git
                team-b:
                  pattern: team-b-*
                  cloneOnStart: false
                  uri: http://git/team-b/config-repo.git
                team-c:
                  pattern: team-c-*
                  uri: http://git/team-a/config-repo.git

In the preceding example, the server clones team-a’s config-repo on startup, before it accepts any requests. All other repositories are not cloned until configuration from the repository is requested.

![[Note]](images/note.png) | Note |

|---|---|

Setting a repository to be cloned when the Config Server starts up can help to identify a misconfigured configuration source (such as an invalid repository URI) quickly, while the Config Server is starting up.

With |

To use HTTP basic authentication on the remote repository, add the username and password properties separately (not in the URL), as shown in the following example:

    spring:
      cloud:
        config:
          server:
            git:
              uri: https://github.com/spring-cloud-samples/config-repo
              username: trolley
              password: strongpassword

If you do not use HTTPS and user credentials, SSH should also work out of the box when you store keys in the default directories (~/.ssh) and the URI points to an SSH location, such as git@github.com:configuration/cloud-configuration.

It is important that an entry for the Git server be present in the ~/.ssh/known_hosts file and that it is in ssh-rsa format.

Other formats (such as ecdsa-sha2-nistp256) are not supported.

To avoid surprises, you should ensure that only one entry is present in the known_hosts file for the Git server and that it matches the URL you provided to the config server.

If you use a hostname in the URL, you want to have exactly that (not the IP) in the known_hosts file.

The repository is accessed by using JGit, so any documentation you find on that should be applicable.

HTTPS proxy settings can be set in ~/.git/config or (in the same way as for any other JVM process) with system properties (-Dhttps.proxyHost and -Dhttps.proxyPort).

![[Tip]](images/tip.png) | Tip |

|---|---|

If you do not know where your |

Spring Cloud Config Server also supports AWS CodeCommit authentication. AWS CodeCommit uses an authentication helper when using Git from the command line. This helper is not used with the JGit library, so a JGit CredentialProvider for AWS CodeCommit is created if the Git URI matches the AWS CodeCommit pattern. AWS CodeCommit URIs follow this pattern: https://git-codecommit.${AWS_REGION}.amazonaws.com/${repopath}.
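A check for this URI shape can be sketched with a regular expression. The following plain-JDK code is an illustrative assumption, not the matcher Spring Cloud Config uses. It accepts URIs of the form https://git-codecommit.<region>.amazonaws.com/<path> and extracts the region.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class CodeCommitUriSketch {

    // https://git-codecommit.${AWS_REGION}.amazonaws.com/${repopath}
    private static final Pattern CODECOMMIT =
            Pattern.compile("^https://git-codecommit\\.([a-z0-9-]+)\\.amazonaws\\.com/(.+)$");

    // Returns the AWS region if the URI has the CodeCommit shape, otherwise an empty Optional.
    static Optional<String> region(String gitUri) {
        Matcher m = CODECOMMIT.matcher(gitUri);
        return m.matches() ? Optional.of(m.group(1)) : Optional.empty();
    }

    public static void main(String[] args) {
        System.out.println(region("https://git-codecommit.us-east-1.amazonaws.com/v1/repos/config-repo"));
        // Optional[us-east-1]
        System.out.println(region("https://github.com/spring-cloud-samples/config-repo"));
        // Optional.empty
    }
}
```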

If you provide a username and password with an AWS CodeCommit URI, they must be the AWS accessKeyId and secretAccessKey that provide access to the repository. If you do not specify a username and password, the accessKeyId and secretAccessKey are retrieved by using the AWS Default Credential Provider Chain.

If your Git URI matches the CodeCommit URI pattern (shown earlier), you must provide valid AWS credentials in the username and password or in one of the locations supported by the default credential provider chain. AWS EC2 instances may use IAM Roles for EC2 Instances.
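To see which URIs trigger the CodeCommit credential provider, the pattern above can be expressed as a small check. This is a hypothetical sketch (`CodeCommitUris` is not Spring Cloud Config's implementation). It assumes that a match means an `https` scheme, a host of the form `git-codecommit.<region>.amazonaws.com`, and a non-empty repository path.

```java
import java.net.URI;
import java.net.URISyntaxException;
import java.util.Optional;

// Hypothetical helper illustrating the CodeCommit URI pattern; not Spring Cloud Config's code.
final class CodeCommitUris {
    private static final String HOST_PREFIX = "git-codecommit.";
    private static final String HOST_SUFFIX = ".amazonaws.com";

    private CodeCommitUris() {}

    /** Returns the region if the URI looks like https://git-codecommit.${AWS_REGION}.amazonaws.com/${repopath}. */
    static Optional<String> region(String uri) {
        final URI parsed;
        try {
            parsed = new URI(uri);
        } catch (URISyntaxException e) {
            return Optional.empty(); // e.g. scp-style SSH locations are not valid URIs
        }
        String host = parsed.getHost();
        String path = parsed.getPath();
        if (!"https".equalsIgnoreCase(parsed.getScheme()) || host == null || path == null || path.length() <= 1) {
            return Optional.empty();
        }
        // The host must have a non-empty region between the prefix and the suffix.
        if (host.length() <= HOST_PREFIX.length() + HOST_SUFFIX.length()
                || !host.startsWith(HOST_PREFIX) || !host.endsWith(HOST_SUFFIX)) {
            return Optional.empty();
        }
        return Optional.of(host.substring(HOST_PREFIX.length(), host.length() - HOST_SUFFIX.length()));
    }

    static boolean isCodeCommit(String uri) {
        return region(uri).isPresent();
    }
}
```

A URI for which `isCodeCommit` returns `true` would need AWS credentials, either as the username and password or from the default credential provider chain.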

Note:

The |

By default, the JGit library used by Spring Cloud Config Server uses SSH configuration files such as ~/.ssh/known_hosts and /etc/ssh/ssh_config when connecting to Git repositories by using an SSH URI.

In cloud environments such as Cloud Foundry, the local filesystem may be ephemeral or not easily accessible.

For those cases, SSH configuration can be set by using Java properties.

In order to activate property-based SSH configuration, the spring.cloud.config.server.git.ignoreLocalSshSettings property must be set to true, as shown in the following example:

```yaml
spring:
  cloud:
    config:
      server:
        git:
          uri: git@gitserver.com:team/repo1.git
          ignoreLocalSshSettings: true
          hostKey: someHostKey
          hostKeyAlgorithm: ssh-rsa
          privateKey: |
            -----BEGIN RSA PRIVATE KEY-----
            MIIEpgIBAAKCAQEAx4UbaDzY5xjW6hc9jwN0mX33XpTDVW9WqHp5AKaRbtAC3DqX
            IXFMPgw3K45jxRb93f8tv9vL3rD9CUG1Gv4FM+o7ds7FRES5RTjv2RT/JVNJCoqF
            ol8+ngLqRZCyBtQN7zYByWMRirPGoDUqdPYrj2yq+ObBBNhg5N+hOwKjjpzdj2Ud
            1l7R+wxIqmJo1IYyy16xS8WsjyQuyC0lL456qkd5BDZ0Ag8j2X9H9D5220Ln7s9i
            oezTipXipS7p7Jekf3Ywx6abJwOmB0rX79dV4qiNcGgzATnG1PkXxqt76VhcGa0W
            DDVHEEYGbSQ6hIGSh0I7BQun0aLRZojfE3gqHQIDAQABAoIBAQCZmGrk8BK6tXCd
            fY6yTiKxFzwb38IQP0ojIUWNrq0+9Xt+NsypviLHkXfXXCKKU4zUHeIGVRq5MN9b
            BO56/RrcQHHOoJdUWuOV2qMqJvPUtC0CpGkD+valhfD75MxoXU7s3FK7yjxy3rsG
            EmfA6tHV8/4a5umo5TqSd2YTm5B19AhRqiuUVI1wTB41DjULUGiMYrnYrhzQlVvj
            5MjnKTlYu3V8PoYDfv1GmxPPh6vlpafXEeEYN8VB97e5x3DGHjZ5UrurAmTLTdO8
            +AahyoKsIY612TkkQthJlt7FJAwnCGMgY6podzzvzICLFmmTXYiZ/28I4BX/mOSe
            pZVnfRixAoGBAO6Uiwt40/PKs53mCEWngslSCsh9oGAaLTf/XdvMns5VmuyyAyKG
            ti8Ol5wqBMi4GIUzjbgUvSUt+IowIrG3f5tN85wpjQ1UGVcpTnl5Qo9xaS1PFScQ
            xrtWZ9eNj2TsIAMp/svJsyGG3OibxfnuAIpSXNQiJPwRlW3irzpGgVx/AoGBANYW
            dnhshUcEHMJi3aXwR12OTDnaLoanVGLwLnkqLSYUZA7ZegpKq90UAuBdcEfgdpyi
            PhKpeaeIiAaNnFo8m9aoTKr+7I6/uMTlwrVnfrsVTZv3orxjwQV20YIBCVRKD1uX
            VhE0ozPZxwwKSPAFocpyWpGHGreGF1AIYBE9UBtjAoGBAI8bfPgJpyFyMiGBjO6z
            FwlJc/xlFqDusrcHL7abW5qq0L4v3R+FrJw3ZYufzLTVcKfdj6GelwJJO+8wBm+R
            gTKYJItEhT48duLIfTDyIpHGVm9+I1MGhh5zKuCqIhxIYr9jHloBB7kRm0rPvYY4
            VAykcNgyDvtAVODP+4m6JvhjAoGBALbtTqErKN47V0+JJpapLnF0KxGrqeGIjIRV
            cYA6V4WYGr7NeIfesecfOC356PyhgPfpcVyEztwlvwTKb3RzIT1TZN8fH4YBr6Ee
            KTbTjefRFhVUjQqnucAvfGi29f+9oE3Ei9f7wA+H35ocF6JvTYUsHNMIO/3gZ38N
            CPjyCMa9AoGBAMhsITNe3QcbsXAbdUR00dDsIFVROzyFJ2m40i4KCRM35bC/BIBs
            q0TY3we+ERB40U8Z2BvU61QuwaunJ2+uGadHo58VSVdggqAo0BSkH58innKKt96J
            69pcVH/4rmLbXdcmNYGm6iu+MlPQk4BUZknHSmVHIFdJ0EPupVaQ8RHT
            -----END RSA PRIVATE KEY-----
```

The following table describes the SSH configuration properties.

Table 5.1. SSH Configuration Properties

| Property Name | Remarks |
|---|---|
| ignoreLocalSshSettings | If |
| privateKey | Valid SSH private key. Must be set if |
| hostKey | Valid SSH host key. Must be set if |
| hostKeyAlgorithm | One of |
| strictHostKeyChecking | |
| knownHostsFile | Location of custom |
| preferredAuthentications | Override server authentication method order. This should allow for evading login prompts if the server has keyboard-interactive authentication before the |

Spring Cloud Config Server also supports a search path with placeholders for {application} and {profile} (and {label} if you need it), as shown in the following example:

```yaml
spring:
  cloud:
    config:
      server:
        git:
          uri: https://github.com/spring-cloud-samples/config-repo
          searchPaths: '{application}'
```

The preceding listing causes the repository to be searched for files in a directory with the same name as the application (as well as at the top level). Wildcards are also valid in a search path with placeholders (any matching directory is included in the search).
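The placeholder and wildcard behavior can be modeled in a few lines of Java. In this sketch, `SearchPathSketch` and its methods are hypothetical, the repository is represented by a list of its top-level directory names, and wildcards are interpreted with `java.nio` glob syntax. That last point is an assumption: the server's own matching rules may differ in detail.

```java
import java.nio.file.FileSystems;
import java.nio.file.PathMatcher;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of searchPaths resolution; not Spring Cloud Config's implementation.
final class SearchPathSketch {
    private SearchPathSketch() {}

    /** Replaces the {application}, {profile}, and {label} placeholders in one search path. */
    static String resolve(String searchPath, String application, String profile, String label) {
        return searchPath
                .replace("{application}", application)
                .replace("{profile}", profile)
                .replace("{label}", label);
    }

    /** Top level ("") plus every repository directory matching the resolved path ('*' is a glob wildcard). */
    static List<String> directoriesToSearch(String searchPath, String application, String profile,
                                            String label, List<String> repoDirectories) {
        String resolved = resolve(searchPath, application, profile, label);
        PathMatcher matcher = FileSystems.getDefault().getPathMatcher("glob:" + resolved);
        List<String> result = new ArrayList<>();
        result.add(""); // the top level of the repository is always searched
        for (String dir : repoDirectories) {
            if (matcher.matches(Paths.get(dir))) {
                result.add(dir);
            }
        }
        return result;
    }
}
```

With `searchPaths: '{application}'` and an application named `foo`, only the top level and `foo/` are searched. With `'{application}*'`, a directory such as `foo-dev/` would be included as well.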

As mentioned earlier, Spring Cloud Config Server makes a clone of the remote Git repository. If the local copy gets dirty (for example, because an OS process changes the folder content), Spring Cloud Config Server cannot update the local copy from the remote repository.

To solve this issue, there is a force-pull property that makes Spring Cloud Config Server force pull from the remote repository if the local copy is dirty, as shown in the following example:

```yaml
spring:
  cloud:
    config:
      server:
        git:
          uri: https://github.com/spring-cloud-samples/config-repo
          force-pull: true
```

If you have a multiple-repositories configuration, you can configure the force-pull property per repository, as shown in the following example:

```yaml
spring:
  cloud:
    config:
      server:
        git:
          uri: https://git/common/config-repo.git
          force-pull: true
          repos:
            team-a:
              pattern: team-a-*
              uri: http://git/team-a/config-repo.git
              force-pull: true
            team-b:
              pattern: team-b-*
              uri: http://git/team-b/config-repo.git
              force-pull: true
            team-c:
              pattern: team-c-*
              uri: http://git/team-a/config-repo.git
```

Note:

The default value for |

Because Spring Cloud Config Server keeps a clone of the remote Git repository, once it checks out a branch to the local repository (for example, when fetching properties by label), it keeps that branch forever or until the next server restart (which creates a new local repository). As a result, a remote branch can be deleted while its local copy is still available for fetching. If a Spring Cloud Config Server client service then starts with --spring.cloud.config.label=deletedRemoteBranch,master, it fetches properties from the local deletedRemoteBranch branch rather than from master.

To keep local repository branches clean and in sync with the remote, set the deleteUntrackedBranches property. It makes Spring Cloud Config Server force-delete untracked branches from the local repository, as shown in the following example:

```yaml
spring:
  cloud:
    config:
      server:
        git:
          uri: https://github.com/spring-cloud-samples/config-repo
          deleteUntrackedBranches: true
```
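The effect of deleteUntrackedBranches can be pictured as a set difference between local and remote branches. The sketch below is hypothetical: the class and method names are illustrative. Following the scenario described earlier, it assumes that the client's comma-separated label list is tried left to right and that the first branch present in the local copy wins.

```java
import java.util.Optional;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical model of deleteUntrackedBranches; not Spring Cloud Config's code.
final class BranchCleanupSketch {
    private BranchCleanupSketch() {}

    /** Local branches that no longer exist on the remote, i.e. the ones a cleanup would delete. */
    static Set<String> untrackedBranches(Set<String> localBranches, Set<String> remoteBranches) {
        Set<String> untracked = new TreeSet<>(localBranches);
        untracked.removeAll(remoteBranches);
        return untracked;
    }

    /** Picks the first label from a comma-separated list that exists as a local branch. */
    static Optional<String> resolveLabel(String labels, Set<String> localBranches) {
        for (String label : labels.split(",")) {
            String trimmed = label.trim();
            if (localBranches.contains(trimmed)) {
                return Optional.of(trimmed);
            }
        }
        return Optional.empty();
    }
}
```

Before cleanup, `deletedRemoteBranch,master` resolves to the stale `deletedRemoteBranch`. After the untracked branch is removed, the same label list falls through to `master`.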

Note:

The default value for |

You can control how often the Config Server fetches updated configuration data from your Git backend by using spring.cloud.config.server.git.refreshRate. The value of this property is specified in seconds. By default, the value is 0, meaning that the Config Server fetches updated configuration from the Git repository every time it is requested.
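The refreshRate rule amounts to a simple elapsed-time comparison. This is a hypothetical sketch, not the server's code. Treating negative values like 0 (fetch every time) is an assumption.

```java
// Hypothetical model of the refreshRate rule; not Spring Cloud Config's code.
final class RefreshRateSketch {
    private RefreshRateSketch() {}

    /**
     * Returns true if the Git backend should be fetched again.
     * A refreshRateSeconds of 0 (the default) means "fetch on every request".
     */
    static boolean shouldFetch(long lastFetchMillis, long nowMillis, long refreshRateSeconds) {
        if (refreshRateSeconds <= 0) {
            return true;
        }
        return nowMillis - lastFetchMillis >= refreshRateSeconds * 1000L;
    }
}
```

With refreshRate set to 60, for example, requests arriving within a minute of the last fetch would be served from the local copy.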

Warning: With VCS-based backends (git, svn), files are checked out or cloned to the local filesystem.

By default, they are put in the system temporary directory with a prefix of |

There is also a “native” profile in the Config Server that does not use Git but loads the config files from the local classpath or file system (any static URL you want to point to with spring.cloud.config.server.native.searchLocations).

To use the native profile, launch the Config Server with spring.profiles.active=native.

Note:

Remember to use the |

Warning:

The default value of the |

Tip: A filesystem backend is great for getting started quickly and for testing. To use it in production, you need to be sure that the file system is reliable and shared across all instances of the Config Server.

The search locations can contain placeholders for {application}, {profile}, and {label}.

In this way, you can segregate the directories in the path and choose a strategy that makes sense for you (such as subdirectory per application or subdirectory per profile).

If you do not use placeholders in the search locations, this repository also appends the {label} parameter of the HTTP resource as a suffix to the search path, so properties files are loaded from each search location and from a subdirectory with the same name as the label (the labelled properties take precedence in the Spring Environment).

Thus, the default behavior with no placeholders is the same as adding a search location ending with /{label}/.

For example, file:/tmp/config is the same as file:/tmp/config,file:/tmp/config/{label}.

This behavior can be disabled by setting spring.cloud.config.server.native.addLabelLocations=false.
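The label-suffix rule for the native backend can be sketched as a list expansion. `NativeLabelLocations` is hypothetical. It checks each location for placeholders individually, which is an assumption about how mixed lists are treated, and it leaves `{label}` unresolved so that the output mirrors the `file:/tmp/config,file:/tmp/config/{label}` example.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of the label-suffix rule for the native backend; not Spring Cloud Config's code.
final class NativeLabelLocations {
    private NativeLabelLocations() {}

    private static boolean hasPlaceholder(String location) {
        return location.contains("{application}") || location.contains("{profile}") || location.contains("{label}");
    }

    /** Each location without placeholders is followed by location/{label} when addLabelLocations is true. */
    static List<String> expand(List<String> searchLocations, boolean addLabelLocations) {
        List<String> expanded = new ArrayList<>();
        for (String location : searchLocations) {
            expanded.add(location);
            if (addLabelLocations && !hasPlaceholder(location)) {
                String base = location.endsWith("/") ? location : location + "/";
                expanded.add(base + "{label}");
            }
        }
        return expanded;
    }
}
```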

Spring Cloud Config Server also supports Vault as a backend.

For more information on Vault, see the Vault quick start guide.

To enable the config server to use a Vault backend, you can run your config server with the vault profile.

For example, in your config server’s application.properties, you can add spring.profiles.active=vault.

By default, the config server assumes that your Vault server runs at http://127.0.0.1:8200.

It also assumes that the name of the backend is secret and that the key is application.

All of these defaults can be configured in your config server’s application.properties.

The following table describes configurable Vault properties:

| Name | Default Value |
|---|---|
| host | 127.0.0.1 |
| port | 8200 |
| scheme | http |
| backend | secret |
| defaultKey | application |
| profileSeparator | , |
| kvVersion | 1 |
| skipSslValidation | false |
| timeout | 5 |

Important:

All of the properties in the preceding table must be prefixed with |

All configurable properties can be found in org.springframework.cloud.config.server.environment.VaultEnvironmentRepository.

Vault 0.10.0 introduced a versioned key-value backend (k/v backend version 2) that exposes a different API than earlier versions: it requires a data/ segment between the mount path and the actual context path, and it wraps secrets in a data object. Setting kvVersion=2 takes this into account.
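Using the defaults from the preceding table (http, 127.0.0.1, 8200, secret), the difference between the two k/v versions can be sketched as follows. `VaultPaths` is hypothetical and is not the `VaultEnvironmentRepository` code. The `/v1/` prefix is the Vault HTTP API's own version prefix and is unrelated to kvVersion. The response body is assumed to be already parsed from JSON into a `Map`.

```java
import java.util.Map;

// Hypothetical helper showing KV v1 vs v2 path and payload differences; not Spring Cloud Config's code.
final class VaultPaths {
    private VaultPaths() {}

    /** URL for reading a secret; KV v2 inserts "data/" between the mount path and the key. */
    static String readUrl(String scheme, String host, int port, String backend, String key, int kvVersion) {
        String path = kvVersion == 2 ? backend + "/data/" + key : backend + "/" + key;
        return scheme + "://" + host + ":" + port + "/v1/" + path;
    }

    /** Extracts the secret's key/value pairs; KV v2 wraps them in an extra "data" object. */
    @SuppressWarnings("unchecked")
    static Map<String, Object> secrets(Map<String, Object> responseBody, int kvVersion) {
        Map<String, Object> data = (Map<String, Object>) responseBody.get("data");
        return kvVersion == 2 ? (Map<String, Object>) data.get("data") : data;
    }
}
```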

With your config server running, you can make HTTP requests to the server to retrieve values from the Vault backend. To do so, you need a token for your Vault server.

First, place some data in your Vault, as shown in the following example:

```shell
$ vault kv put secret/application foo=bar baz=bam
$ vault kv put secret/myapp foo=myappsbar
```

Second, make an HTTP request to your config server to retrieve the values, as shown in the following example:

```shell
$ curl -X "GET" "http://localhost:8888/myapp/default" -H "X-Config-Token: yourtoken"
```

You should see a response similar to the following:

```json
{
  "name": "myapp",
  "profiles": [
    "default"
  ],
  "label": null,
  "version": null,
  "state": null,
  "propertySources": [
    {
      "name": "vault:myapp",
      "source": {
        "foo": "myappsbar"
      }
    },
    {
      "name": "vault:application",
      "source": {
        "baz": "bam",
        "foo": "bar"
      }
    }
  ]
}
```
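The same request can be issued from Java with the JDK's `java.net.http` client (Java 11 or later). In this sketch, `ConfigServerRequest` is a hypothetical helper. The base URL, application, profile, and token mirror the curl command, and `fetch` only works against a running Config Server.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical client-side helper mirroring the curl command above.
final class ConfigServerRequest {
    private ConfigServerRequest() {}

    /** Builds GET {baseUrl}/{application}/{profile} with the Vault token in the X-Config-Token header. */
    static HttpRequest build(String baseUrl, String application, String profile, String vaultToken) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/" + application + "/" + profile))
                .header("X-Config-Token", vaultToken)
                .GET()
                .build();
    }

    /** Sends the request and returns the raw JSON body (requires a running Config Server). */
    static String fetch(HttpRequest request) throws IOException, InterruptedException {
        HttpResponse<String> response =
                HttpClient.newHttpClient().send(request, HttpResponse.BodyHandlers.ofString());
        return response.body();
    }
}
```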

When using Vault, you can provide your applications with multiple properties sources. For example, assume you have written data to the following paths in Vault:

```text
secret/myApp,dev
secret/myApp
secret/application,dev
secret/application
```

Properties written to secret/application are available to all applications using the Config Server.

An application named myApp has the properties written to secret/myApp and secret/application available to it.
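The relationship between an application name, its active profiles, and the Vault paths listed earlier can be sketched in Java. `VaultKeyOrder` is hypothetical. It uses the defaultKey (application) and profileSeparator (,) defaults from the table and lists paths most specific first, which reproduces the path list above for myApp with the dev profile. With no profile, it yields secret/myApp before secret/application, the same order as the propertySources in the earlier response. How several profiles are ordered relative to each other (later profiles first) is an assumption.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical sketch of deriving Vault paths for an application; not Spring Cloud Config's code.
final class VaultKeyOrder {
    private VaultKeyOrder() {}

    /** Returns Vault paths for the application, most specific first. */
    static List<String> pathsFor(String backend, String application, List<String> profiles,
                                 String defaultKey, String profileSeparator) {
        List<String> keys = new ArrayList<>();
        // Build from least to most specific, then reverse.
        if (!defaultKey.equals(application)) {
            addWithProfiles(keys, defaultKey, profiles, profileSeparator);
        }
        addWithProfiles(keys, application, profiles, profileSeparator);
        Collections.reverse(keys);

        List<String> paths = new ArrayList<>();
        for (String key : keys) {
            paths.add(backend + "/" + key);
        }
        return paths;
    }

    private static void addWithProfiles(List<String> keys, String base, List<String> profiles, String separator) {
        keys.add(base);
        for (String profile : profiles) {
            keys.add(base + separator + profile);
        }
    }
}
```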